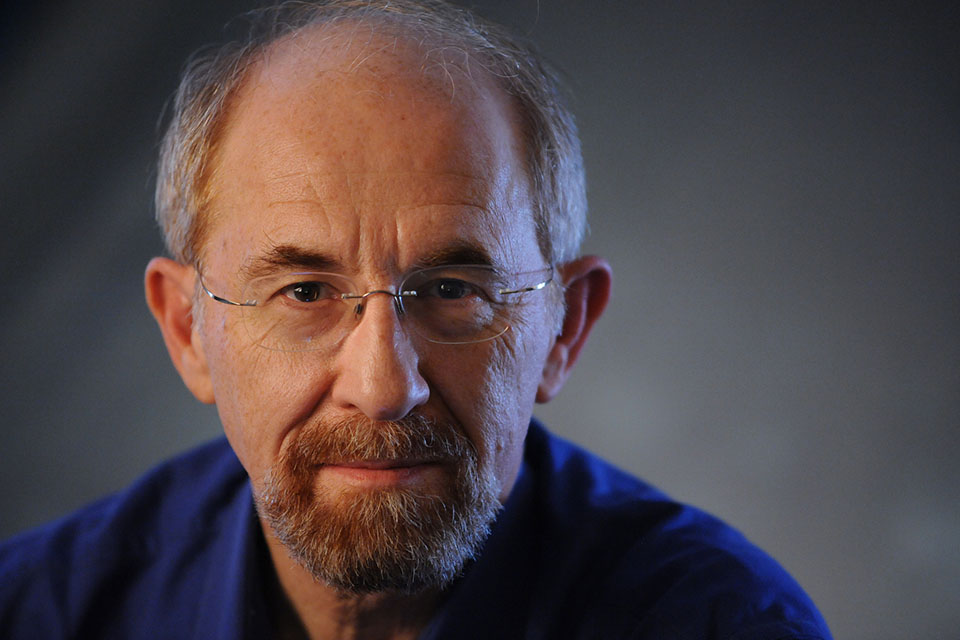

Henry Fuchs (PhD, Utah 1975) is the Federico Gil Distinguished Professor of Computer Science and Adjunct Professor of Biomedical Engineering at UNC Chapel Hill. He has been active in computer graphics since the 1970s, working on rendering algorithms (BSP Trees), high performance graphics hardware (Pixel-Planes), the office of the future, virtual reality, telepresence, and medical applications. He is a member of the National Academy of Engineering, a fellow of the American Academy of Arts and Sciences, and the recipient of the SIGGRAPH Steven Anson Coons Award and an honorary doctorate from TU Wien, the Vienna University of Technology.

Just as today’s mobile phones are much more than simply telephones, tomorrow’s Augmented Reality glasses will be much more than simply displays in one’s prescription eyewear. For starters, these glasses will include autofocus capabilities for more comfortable viewing of real-world surroundings than today’s bifocals and progressive lenses. Their internal displays will depth-accommodate the virtual objects for comfortable viewing of the combined real-and-virtual scene. The glasses will include eye, face, and body tracking for gaze control, for the user interface, and for scene capture to support telepresence. The glasses will also display virtually embodied personal assistants next to the user. These assistants will be more useful than today’s Siri and Alexa because they will be aware of the user’s situation, gaze, and pose. These virtual assistants will appear and move around in the user’s real surroundings. Although early versions of these capabilities are starting to emerge in commercial products, widespread impact will occur only when a critical mass of capabilities is integrated into a single device. This talk will describe several recent experiments and speculate on future directions.

Impossible outside Virtual Reality

For Virtual Reality and Augmented Reality to become the primary devices for interaction with digital content, we need to look beyond the form factor and understand what types of things we can do in VR that would be impossible with other technologies. That is, what does spatial computing bring to the table? For one, the spatialization of our senses. We can enhance audio or proprioception in completely new ways. We can grab and touch objects with new controllers like never before, even in the empty space between our hands. But how fast do we adapt to the new sensory experiences?

Avatars are also unique to VR. They represent other humans but can also substitute for our own bodies. And we perceive our world through our bodies. Hence avatars also change our perception of our surroundings.

In this presentation we will explore the uniqueness of VR, from perception to avatars, and how they can ultimately change our behavior and interactions with digital content.

Dr Mar Gonzalez Franco is a computer scientist at Microsoft Research. Her expertise and research span from VR to avatars to haptics, and her interests include the further understanding of human perception and behavior. In her research she uses real-time computer graphics and tracking systems to create immersive experiences and study human responses. Prior to pivoting into industrial research she held several positions and completed her studies in leading academic institutions including the Massachusetts Institute of Technology, University College London, Tsinghua University and Universidad de Barcelona. Later she also took roles as a scientist in the startup world, joining Traity.com to build mathematical models of trust. She created and led an Immersive Technologies Lab for the leading aeronautic company Airbus. Over the years her work has been covered by tech and global media such as Fortune Magazine, TechCrunch, The Verge, ABC News, GeekWire, Inverse, Euronews, El Pais, and Vice. She was named one of 25 young tech talents by Business Insider, and won the MAS Technology award in 2019. She continues to serve as a field expert for governmental organizations worldwide such as the US-NSF, the NSERC in Canada and the EU, evaluating Future and Emerging Technologies projects.

Bringing VR/AR experiences to Live Sports – Opportunities and Challenges

This presentation will focus on the significant opportunity for VR and AR in the sports industry and use the journey of VOKE VR, from startup through acquisition by Intel and subsequent growth, to illustrate challenges and successes along the way. The speakers will bring out the nuances of working in the sports industry, bringing new technology to fans, specific VR-related technical and production challenges, and the future of immersive media in sports and entertainment.

Dr. Uma Jayaram (ASME Fellow) is an entrepreneurial leader and technology executive. Her professional achievements and philosophy have evolved through three distinct spheres of work: leadership of a global engineering team at Intel; entrepreneurship as a co-founder of three start-ups; and academic research, teaching, and mentorship as a professor at Washington State University. The three companies she co-founded were in the areas of VR media experiences for sports/concerts, CAD interoperability, and VR/CAD/AI for enterprise design. The company VOKE VR was acquired by Intel in 2016.

At Intel, as Managing Director and Principal Engineer, Intel Sports, Uma built teams and technologies for high-profile executions such as the first ever Live Olympics VR experience at the Winter Olympics in 2018 and experiences for world-class leagues and teams such as NFL, NBA, PGA, NCAA, and La Liga. The teams continued to evolve technologies for VR and Volumetric/Spatial Computing executions including media streaming & processing, cloud integrations, platform services, off-site NOC services, distribution over CDN, and SDKs for mobile apps and HMDs. Uma’s teams have a very distinctive culture that combines intellectual vitality, disciplined execution, and a strong employee-centric foundation.

Uma has an undergraduate degree in Mechanical Engineering from IIT Kharagpur, a top technical university in India, and was the first woman admitted to the department. She earned her MS and PhD degrees from Virginia Tech, where she recently received a Distinguished Alumna award and currently sits on the advisory board. Uma has received a Lifetime Achievement award from ISAM and the Leadership Excellence in Technology award from the National Diversity Council.

Dr. S. (Jay) Jayaram has driven the digitization and personalization of sports for fans through immersive technologies. Through his influential work in virtual reality over the past 25 years, he has brought VR to a wide spectrum of domains - from live events in sports and concerts to VR for engineering applications and training. He has brought to market highly innovative solutions and products combining virtual reality, immersive environments, powerful user controllable media experiences, and social networks. The work done by his team in Virtual Assembly and Virtual Prototyping in the 90s continues to be widely referenced by groups around the world. Dr. Jay has co-founded several companies including VOKE (acquired by Intel in 2016), Integrated Engineering Solutions, and Translation Technologies. He was also a Professor at Washington State University and co-founded the WSU Virtual Reality Laboratory in 1994. Most recently he was Chief Technology Officer and Chief Product Officer at Intel Sports. He is currently the CEO of a startup, QuintAR, Inc.

Rosie is a 3D animator and Virtual Reality Artist at XR Games, which recently released Angry Birds Movie 2 VR: Under Pressure for PlayStation VR. She brings characters and worlds to life in high speed through her work as a VR artist, covering all areas of the production pipeline, and has a breadth of experience working with a whole range of headsets. She also loves the performative element that comes with creating art in virtual space and has performed live VR paintings at numerous festivals and events, working with high profile clients such as the BBC and Google.

Extended Reality: Use cases and design considerations for AR, MR, and VR

Extended reality refers to a superset of experiences that involve the presentation of digital content with varying degrees of real world visibility (from virtual reality, in which the real world is invisible, to mixed reality systems that integrate digital content into the real world in roughly equal proportions, to systems that show the real world with only light digital augmentations). In addition, today’s augmented reality, mixed reality, and virtual reality systems vary across a number of other criteria (small to large field of view, head-tracked vs. non-tracked, head-worn vs. external display, stereoscopic vs. non-stereoscopic, single focus vs. multi-focus, and their ability to represent occlusions). We will discuss a number of representative examples across the XR spectrum, what kinds of experiences are best suited to the different platforms, and design considerations for each platform—with particular emphasis on how these technologies interact with human perception and cognition.

Brian Schowengerdt is the Co-Founder and Chief Science and Experience Officer of Magic Leap, and an Affiliate Assistant Professor of Mechanical Engineering in the University of Washington's Human Photonics Lab. Schowengerdt received his Bachelor's degree (summa cum laude) in 1997 from the University of California, Davis, with a triple major in psychology, philosophy, and German. He received his Ph.D. (2004, U.C. Davis) in psychology, with an emphasis in cognition and perception, and conducted his doctoral research at the U.W. Human Interface Technology Lab, where he studied display system design, optical engineering, and mechanical engineering in the course of developing mixed reality and virtual reality systems. He is an inventor on more than 100 issued and pending patents, has given numerous plenary and invited presentations at display industry conferences, and has authored a variety of papers on light field displays, novel microdisplays, and human perception. Schowengerdt has served as the Chair of the Display System committee and Program Vice Chair for 3D for the SID International Symposia, and the Program Committee Co-Chair for the Laser Display Conference, and as associate editor of the Journal of the SID and guest editor for Information Display magazine. Since 2000, he has combined knowledge of sensory physiology, optics, and mechanical engineering to develop and miniaturize mixed reality systems matched to the needs of human perceptual systems.

Ken Perlin, a professor in the Department of Computer Science at New York University, directs the Future Reality Lab, and is a participating faculty member at NYU MAGNET. His research interests include future reality, computer graphics and animation, user interfaces and education. He is chief scientist at Parallux, Tactonic Technologies and Autotoon. He is an advisor for High Fidelity and a Fellow of the National Academy of Inventors. He received an Academy Award for Technical Achievement from the Academy of Motion Picture Arts and Sciences for his noise and turbulence procedural texturing techniques, which are widely used in feature films and television, as well as membership in the ACM/SIGGRAPH Academy, the 2020 New York Visual Effects Society Empire Award, the 2008 ACM/SIGGRAPH Computer Graphics Achievement Award, the TrapCode award for achievement in computer graphics research, the NYC Mayor's award for excellence in Science and Technology, the Sokol award for outstanding Science faculty at NYU, and a Presidential Young Investigator Award from the National Science Foundation. He serves on the Advisory Board for the Centre for Digital Media at GNWC. Previously he served on the program committee of the AAAS, was external examiner for the Interactive Digital Media program at Trinity College, general chair of the UIST2010 conference, directed the NYU Center for Advanced Technology and Games for Learning Institute, and has been a featured artist at the Whitney Museum of American Art. He received his Ph.D. in Computer Science from NYU, and a B.A. in theoretical mathematics from Harvard. Before working at NYU he was Head of Software Development at R/GREENBERG Associates in New York, NY. Prior to that he was the System Architect for computer generated animation at MAGI, where he worked on TRON.