EE/CSE 576 Final Project: Recognition/Detection

Assigned: Monday, April 17

Proposal due: Tuesday, May 9

Status reports due: Tuesday, May 23

Final poster presentation: Monday, June 5

Final reports due: Tuesday, June 6

Late due dates: 2 days after the original due dates

Synopsis

For the final project, as with the other projects, we anticipate that people will work in teams of two or three.

There are two options for this project, which will take roughly four weeks:

- Implement a state-of-the-art research paper from a recent computer vision conference or journal (CVPR, ICCV, ECCV, or vision parts of NIPS, ICML, SIGGRAPH, SIGGRAPH Asia, etc.).

- Complete a short research project (more fun!)

You can devise your own project from scratch, or use one of the ideas suggested below. In either case, the purpose is to learn more about recognition or detection and to get a feel for doing research in computer vision.

All projects must have a machine learning component.

Guidelines

Option 1

Start by searching through recent computer vision conference proceedings or journal articles and choosing a paper that interests you. The premier vision conferences are ICCV, CVPR, and ECCV. The premier journals are Transactions on Pattern Analysis and Machine Intelligence and the International Journal of Computer Vision. Computer vision papers also sometimes appear in graphics and machine learning conferences such as NIPS, ICML, SIGGRAPH, and SIGGRAPH Asia. We recommend starting with the most recent years, i.e., CVPR 2016, ICCV 2015, ECCV 2016, etc. Most of the papers and project web sites are linked online from here. You should select a paper that is appropriate for a four-week project; that is, it should be more involved than one of the class projects. Our expectation is that you will implement the method yourself rather than using any code that the authors make available.

Option 2

For this option we'd like you to do a research project with some novelty, i.e., something that no one has published before. Naturally we're not expecting PhD-level research in this amount of time, but since two or three of you will be working together you should be able to come up with exciting results :)

The following are some examples of what we have in mind.

- An interesting extension of prior work. In most cases, we'd recommend implementing the prior method yourself rather than downloading implementations available online, as this gives you a better understanding of how the method works (and you can avoid mucking around with someone else's code). But this is not a hard and fast rule: if the extension is very significant, you may use available code.

- A new application of prior work. Apply a known technique to a new application domain, and evaluate its performance.

- Develop a new solution (hopefully better!) to an existing problem. If you choose this option, you must work out the solution by the time you submit the proposal, so that you can convince us that your method will work.

- Pose a new technical problem and solve it. Identify a new problem for which no known solution exists, devise a solution, and implement/test it. If you choose this option, you must work out both the problem and the solution by the time you submit the proposal.

How ambitious/difficult should your project be? Each team member should count on committing substantially more effort than on the previous class projects.

Requirements

Proposal

Each team will turn in a one-page proposal describing their project. It should specify:

- Your team members

- Project goals. Be specific. Describe the input and output.

- Milestones you anticipate reaching along the way.

- Special equipment that will be needed. We may be able to help with cameras, tripods, etc. (The sooner we know about equipment needs, the better.)

Each team must submit a proposal, even if you choose one of the research ideas described below.

Status reports

Each team will post a status report midway through the project, summarizing progress to date.

Final poster presentation

We will have a poster session at the end of the quarter, where each group will present their project to the class. Details will be announced closer to the time of the poster session.

Final Write-up

Turn in a write-up (by 11:59pm, June 6) describing your problem and approach. It should include the following:

- Title and team members

- Short intro

- Related work, with references to papers and web pages

- Technical description including algorithm

- Experimental results

- Discussion of results, strengths/weaknesses, what worked, what didn't.

- Future work and what you would do if you had more time

Turn in format

All write-ups should be submitted through the Catalyst dropbox, in the NIPS format.

Project Ideas for Option 2

Here are several ideas related to object detection/recognition that would be appropriate for final research projects. Feel free to choose variations of these or to devise your own research problems that are not on this list. You can either leverage deep learning or not, depending on your skill set and target. We're happy to meet with you to discuss any of these (or other) project ideas in more detail.

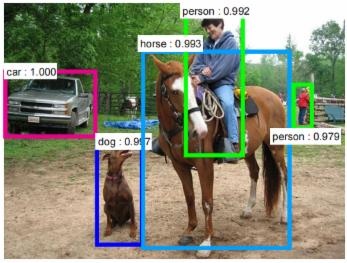

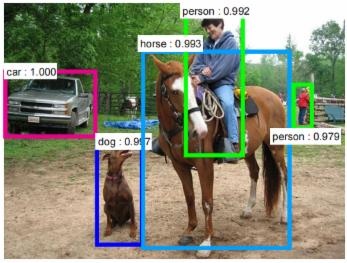

Face/Pedestrian/Vehicle recognition. These are perhaps the most widely used object recognition scenarios. You will build your own detection framework and try it on a standard benchmark dataset. Example research problems include how to balance speed and accuracy, and how object scale, density, or lighting conditions affect the detection results.
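When evaluating a detector on a benchmark, a common convention (an assumption here, since the handout does not prescribe a metric) is to count a detection as correct when its intersection over union (IoU) with a ground-truth box exceeds a threshold such as 0.5. A minimal sketch, with a hypothetical `iou` helper and boxes given as `(x1, y1, x2, y2)`:

```python
def iou(box_a, box_b):
    """Intersection over union of two axis-aligned boxes.

    Each box is (x1, y1, x2, y2) with x1 < x2 and y1 < y2.
    The box format is an assumption made for this sketch.
    """
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Width and height of the overlap region (zero if the boxes are disjoint).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def is_match(detection, ground_truth, threshold=0.5):
    """True when the detection overlaps the ground truth enough to count."""
    return iou(detection, ground_truth) >= threshold
```

Raising the threshold demands tighter localization, which is one way to study how scale or lighting changes affect detection quality.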

Tracking by detection. One classic approach to tracking a moving object is to remember it and then find it. The object model and tracking status are updated continuously across the video frames, so that appearance changes, occlusion, etc. can be handled. You will build an online object tracking framework and perhaps give a demo during our poster session.
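The "remember it, then find it" loop can be sketched in a few lines. This toy version is only an illustration under stated assumptions: frames are grayscale images stored as lists of lists, the object model is a raw pixel template, matching is an exhaustive sum-of-squared-differences search, and the model is updated with a running average. All function names are hypothetical, and a real tracker would use stronger features and a restricted search region.

```python
def crop(frame, top, left, h, w):
    """Cut an h x w patch out of a frame stored as a list of rows."""
    return [row[left:left + w] for row in frame[top:top + h]]


def ssd(a, b):
    """Sum of squared differences between two equally sized patches."""
    return sum((x - y) ** 2 for ra, rb in zip(a, b) for x, y in zip(ra, rb))


def find(frame, template):
    """Exhaustively search the frame for the best-matching patch position."""
    h, w = len(template), len(template[0])
    best_cost, best_pos = None, None
    for top in range(len(frame) - h + 1):
        for left in range(len(frame[0]) - w + 1):
            cost = ssd(crop(frame, top, left, h, w), template)
            if best_cost is None or cost < best_cost:
                best_cost, best_pos = cost, (top, left)
    return best_pos


def track(frames, top, left, h, w, rate=0.2):
    """Track the patch given in the first frame through the remaining frames."""
    template = crop(frames[0], top, left, h, w)  # remember the object
    path = [(top, left)]
    for frame in frames[1:]:
        top, left = find(frame, template)        # find it again
        patch = crop(frame, top, left, h, w)
        # Blend the new appearance into the model (running average).
        template = [[(1 - rate) * t + rate * p for t, p in zip(rt, rp)]
                    for rt, rp in zip(template, patch)]
        path.append((top, left))
    return path
```

The `rate` parameter controls how quickly the model adapts: a small value resists drift, while a large value follows appearance changes faster.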

Object re-identification. It is common for an object to appear in different cameras with different viewpoints, zoom levels, or lighting conditions. Object re-identification finds such matches and enables many surveillance applications, such as finding criminals. You will design your own object re-id pipeline and compare it with several state-of-the-art approaches.
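One simple way to frame the matching step, assuming each image has already been reduced to a fixed-length feature vector (the feature extractor is up to you and is not shown), is to rank a gallery of known identities by distance to the query. The names `rank_gallery` and `rank1_correct` are hypothetical, and Euclidean distance is just one possible choice.

```python
import math


def rank_gallery(query, gallery):
    """Return gallery ids sorted from closest to farthest from the query.

    `query` is a feature vector; `gallery` maps an id to its feature vector.
    """
    return sorted(gallery, key=lambda gid: math.dist(query, gallery[gid]))


def rank1_correct(query, true_id, gallery):
    """True when the closest gallery entry has the query's true identity."""
    return rank_gallery(query, gallery)[0] == true_id
```

Averaging `rank1_correct` over a set of queries gives a rank-1 accuracy that can be used to compare pipelines.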

Product description. Given a product image, can you generate a paragraph describing the object? You can also develop projects that connect computer vision and natural language processing.

License plate recognition (LPR) serves as a critical part of many surveillance applications. A typical LPR pipeline includes license plate localization, character segmentation, and character recognition. However, building a robust and fast LPR system is still very challenging. You will implement your own LPR approach and may try something different. You should demo your LPR system during the poster session.
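As an illustration of the character segmentation stage only, one classic baseline (an assumption here, not a required method) is a vertical projection profile: binarize the cropped plate, count ink pixels per column, and split wherever a column is empty. The sketch below assumes the plate is already localized and binarized into a list of rows with 1 for ink and 0 for background; `segment_columns` is a hypothetical name.

```python
def segment_columns(binary):
    """Split a binarized plate into character column ranges.

    `binary` is a list of rows with 1 for ink and 0 for background.
    Returns (start, end) column ranges, with end exclusive.
    """
    width = len(binary[0])
    # Vertical projection profile: number of ink pixels in each column.
    profile = [sum(row[c] for row in binary) for c in range(width)]
    segments, start = [], None
    for c, count in enumerate(profile):
        if count > 0 and start is None:
            start = c                      # a character begins
        elif count == 0 and start is not None:
            segments.append((start, c))    # a character ends at a gap
            start = None
    if start is not None:
        segments.append((start, width))    # character touching the right edge
    return segments
```

This baseline breaks down when characters touch or when noise bridges the gaps, which is part of what makes robust LPR challenging.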

Human pose/hand gesture recognition is popular in many medical/HCI applications. The basic idea behind such articulated object recognition is similar in both cases: finding object parts and their best combination.

Instance recognition. Object instance recognition has attracted increasing interest recently; it tries to distinguish different object instances that belong to the same class, for example, different kinds of dogs, birds, or cars. In practice, this is useful for warehouse management, robotics, traffic surveillance, etc. You will read state-of-the-art papers, work on a standard instance recognition dataset, and try your own approach.

Cancer biopsy diagnosis. The task is to classify a given region of interest (ROI) from a whole-slide biopsy into one of the four diagnostic categories: benign, atypia, DCIS, and invasive. There are 428 ROIs marked and diagnosed by expert pathologists. ROIs have different sizes and shapes, but each has only one diagnostic label. You can use different approaches to overcome size differences: sliding windows, resizing, etc.
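For the sliding-window route, one possible arrangement is to cut each ROI into fixed-size patches, classify every patch, and combine the patch predictions into a single ROI label. The sketch below shows only the window enumeration and a majority vote; the patch classifier is not shown, the vote is an assumed aggregation rule rather than a required one, and the function names are hypothetical.

```python
from collections import Counter


def window_origins(height, width, size, stride):
    """Top-left corners of size x size windows laid over an ROI.

    If the ROI is smaller than the window in some dimension, only
    offset 0 is returned for that dimension, so the caller would
    need to pad or resize such an ROI.
    """
    rows = range(0, max(height - size, 0) + 1, stride)
    cols = range(0, max(width - size, 0) + 1, stride)
    return [(r, c) for r in rows for c in cols]


def roi_label(patch_labels):
    """Combine per-patch predictions into one ROI label by majority vote."""
    return Counter(patch_labels).most_common(1)[0][0]
```

For example, `window_origins(5, 7, 3, 2)` yields six windows, and `roi_label(["benign", "DCIS", "DCIS"])` returns `"DCIS"`. Other aggregation rules, such as taking the most severe predicted category, may suit the diagnostic setting better.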