Getting Started

Requirements

Get access to a computer with Qt 5.12 or above installed and at least OpenGL 4.1. You can either work in the dedicated instructional lab CSE 002 (Allen), or from home.

We will be using GitLab for version control and for distributing and collecting projects. Log in if you have not done so before, preferably with your CSE account. When each project is assigned, a repository will be created for you with the skeleton code ready to clone and build.

If you are working in the labs, make sure to clone your repository into the shared Z drive. Failure to do so can result in confusing build and compile issues from Qt.


You can either use command-line Git, or install a GUI like SourceTree which helps a lot with visualization of commits, merges, branches, and is easy to use if you are not a Git expert. If you’re unfamiliar with Git or need a refresher, the official website has a great e-book on Git and Version Control Systems in general.

Working from home


You will need a compiler to work with Qt.

- On Windows, you can install Visual Studio 2017 and use MSVC.

- On Mac, you can install Xcode and use Clang.

- On Linux, you can use g++.

If you wish to work from home, you can install an open-source version of Qt from their website. You can choose to skip the prompt to sign up for an account. You can also use any OpenGL viewer (like GL-Z) to check which version of OpenGL your computer supports.

Install Git if it’s not installed already.

While you can use any IDE you want (qmake can generate Makefiles and Visual Studio solutions), we officially support and recommend Qt Creator (which comes installed with Qt) and will provide instructions assuming you are using Qt Creator.

If you are working on Windows, you probably need to follow these instructions to set up a debugger.

WARNING: You should install a compiler before you install Qt so that Qt can automatically detect your compiler installation.

When a project is assigned

As soon as possible, clone the repository and make sure the skeleton code builds and runs fine. If you run into problems, contact us immediately. You are out of luck if you wait until the last day to tell us you are having issues.

Project Submission

Submitting your assignment

Edit the README.md to answer the questions presented. Make sure to describe any bells, whistles, and anything out of the ordinary you did for the project. This will help us grade your project and make sure you receive all the credit you deserve.


When you have made sure you have committed and pushed everything to your GitLab repository, tag your commit with SUBMIT, and push the tag. We will run a script to clone all repositories shortly after the due date and we will be grading your project based on which commit you tag. You will be able to see on GitLab whether you have done so successfully. To tag a commit, simply do the following:

git tag SUBMIT

git push --tags

In this screenshot, under Repository->Tags, the commit “test commit” is the one tagged for submission.

After you’ve done this, please clone your repo into a separate directory on a Windows 10 machine (ideally the Allen 002 ones as we will be grading on those) and make sure it runs fine. If we build your submission and it does not run on grading day, you will be penalized.

Submitting your artifact

After each assignment, you are required to submit an ‘artifact’ that is created with your project and shows off the features of your program.

You will lose points if you do not submit something. We will not grade on artistic merit. However, after everyone submits their artifacts, we will create a gallery on the course website where you will vote on your favorite artifacts. The winner and runners-up will receive a small amount of extra credit. So be sure to try your best!

The link to submit the artifact will be available on the project pages after the code submission deadline. We will also be sending out reminders.

OpenGL

What is OpenGL?

OpenGL sits between the graphics hardware and the application, providing a standard, cross-platform API for hardware-accelerated rendering. There are a few other popular rendering technologies, such as Microsoft’s DirectX, Apple’s Metal, and the more recent Vulkan, but we will be using OpenGL because it is supported on Windows, Mac, and Linux.

In the first project, you will be writing OpenGL code that draws primitives like triangles and lines to emulate brush strokes. In the second project, you will be writing OpenGL shaders that describe how the GPU colors your 3D scene and models. At any time, you are free to explore OpenGL on your own and go above and beyond to extend the program to suit your own needs.

How does it work?

Graphics hardware used to be very specific and inflexible: a fixed-function pipeline that output pixels to the screen. These days, many stages of this pipeline are programmable (shaders), and we can even perform arbitrary massively parallel computations on the GPU.

OpenGL operates like a state machine with global variables. When something is changed, it stays changed until something else changes it again. All the state associated with an instance of OpenGL is stored in an OpenGL Context. However, while the OpenGL API is defined as a standard, the creation of an OpenGL Context is not. Every operating system does it differently, so we want to use a windowing toolkit like Qt or FLTK that handles cross-platform context creation automatically and also provides a GUI framework.

Since there is no single implementation of OpenGL, each graphics hardware vendor supplies its own implementation with its graphics drivers, which means OpenGL functions must be loaded dynamically at runtime. It would be an enormous pain to do this by hand, so loading libraries such as GLEW are used instead.

Graphics hardware does not understand higher-level primitives such as circles and rectangles. It only understands how to draw things like points, lines, and triangles. A 3D sphere drawn on the GPU is actually a whole bunch of triangles that approximate a sphere. Each primitive has one (point), two (line), or three (triangle) vertices, to which you can attach any data you would like, such as a 3D position or an intrinsic color.
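To make the idea of approximating a round shape with triangles concrete, here is a minimal CPU-side sketch in C++ that builds the triangle list for a 2D circle. It is not taken from the project skeleton: the names Vec2 and circleTriangles are made up for illustration, and no OpenGL calls are involved. A sphere works the same way, just with more triangles.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical 2D point type used only for this illustration.
struct Vec2 {
    float x;
    float y;
};

// Build a triangle list that approximates a circle centred at the origin.
// Every three consecutive entries form one triangle:
// (centre, rim point i, rim point i + 1).
std::vector<Vec2> circleTriangles(float radius, int segments) {
    assert(segments >= 3 && "need at least three segments to enclose an area");
    const float kTwoPi = 6.28318530718f;

    std::vector<Vec2> vertices;
    vertices.reserve(static_cast<std::size_t>(segments) * 3);
    for (int i = 0; i < segments; ++i) {
        const float a0 = kTwoPi * i / segments;        // angle of this rim point
        const float a1 = kTwoPi * (i + 1) / segments;  // angle of the next rim point
        vertices.push_back({0.0f, 0.0f});
        vertices.push_back({radius * std::cos(a0), radius * std::sin(a0)});
        vertices.push_back({radius * std::cos(a1), radius * std::sin(a1)});
    }
    return vertices;
}
```

Calling circleTriangles(1.0f, 32) returns 96 vertices, that is, 32 triangles. Raising the segment count makes the outline smoother at the cost of more vertices.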

Specifics

The set of all data and data types belonging to a vertex is defined as the Vertex Format. The vertices of these basic primitives are stored in something called a Vertex Buffer, which is really just a GPU-allocated piece of memory. You can store the data for each vertex attribute in separate vertex buffers (for example, one vertex buffer for 3D positions and another for intrinsic colors), or you can store it interleaved in one giant vertex buffer. Everything required to render a specific set of primitives is saved in a Vertex Array Object.
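As a rough illustration of an interleaved layout, the C++ sketch below describes a vertex format with a position and a color and computes the stride and byte offsets that describe it. The Vertex struct and the constant names are assumptions made for this example, not the skeleton code's actual format. These are the kind of values you would typically pass to glVertexAttribPointer when telling OpenGL where each attribute lives inside the buffer.

```cpp
#include <cstddef>  // offsetof, std::size_t

// Hypothetical interleaved vertex format: a 3D position followed by an RGB color.
struct Vertex {
    float position[3];
    float color[3];
};

// Distance in bytes from the start of one vertex to the start of the next.
constexpr std::size_t kStride = sizeof(Vertex);

// Byte offset of each attribute inside a single vertex.
constexpr std::size_t kPositionOffset = offsetof(Vertex, position);
constexpr std::size_t kColorOffset = offsetof(Vertex, color);

// Sanity checks on the layout, evaluated at compile time.
static_assert(kStride == 6 * sizeof(float), "six floats per vertex, no padding");
static_assert(kPositionOffset == 0, "position comes first");
static_assert(kColorOffset == 3 * sizeof(float), "color follows the position");

// One triangle in interleaved form: position and color sit side by side.
const Vertex kTriangle[3] = {
    {{-0.5f, -0.5f, 0.0f}, {1.0f, 0.0f, 0.0f}},
    {{ 0.5f, -0.5f, 0.0f}, {0.0f, 1.0f, 0.0f}},
    {{ 0.0f,  0.5f, 0.0f}, {0.0f, 0.0f, 1.0f}},
};
```

With separate buffers, you would instead keep one tightly packed array of positions and another of colors, and each attribute would have an offset of zero in its own buffer.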

A lot of OpenGL functions operate on the notion of a global “current” something that is “bound.” For instance, there is a global GL_ARRAY_BUFFER variable which must have a Vertex Buffer bound to it before you can do anything with the GL_ARRAY_BUFFER like upload data to it.

OpenGL manages its own memory. OpenGL objects are represented by unique integers. There is a whole slew of glGenX functions that request a GPU resource and give you its ID in return. However, you must also remember to ask OpenGL to delete those resources when they are no longer used.
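The bind-then-operate pattern and the integer IDs can feel odd at first, so here is a toy C++ model of them. To be clear, this is not the OpenGL API: ToyContext and all of its member functions are invented for this example so that it can run without a GPU or a context, and it simplifies details (for instance, it allocates storage as soon as an ID is generated). The comments name the real OpenGL calls that each toy function loosely mirrors.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <vector>

// Toy stand-in for an OpenGL context. All names here are hypothetical.
class ToyContext {
public:
    // Loosely mirrors glGenBuffers: hands back a fresh integer ID.
    unsigned genBuffer() {
        const unsigned id = nextId_++;
        buffers_[id];  // create empty storage for this ID
        return id;
    }

    // Loosely mirrors glBindBuffer(GL_ARRAY_BUFFER, id): sets the "current" buffer.
    void bindArrayBuffer(unsigned id) { boundArrayBuffer_ = id; }

    // Loosely mirrors glBufferData(GL_ARRAY_BUFFER, ...): takes no ID,
    // and writes to whichever buffer is currently bound.
    void arrayBufferData(const std::vector<float>& data) {
        assert(boundArrayBuffer_ != 0 && "bind a buffer first");
        buffers_[boundArrayBuffer_] = data;
    }

    // Loosely mirrors glDeleteBuffers: releases the resource behind an ID.
    void deleteBuffer(unsigned id) {
        buffers_.erase(id);
        if (boundArrayBuffer_ == id) {
            boundArrayBuffer_ = 0;  // nothing is bound any more
        }
    }

    // Inspection helpers for the example (no direct OpenGL equivalent intended).
    std::size_t bufferSize(unsigned id) const {
        const auto it = buffers_.find(id);
        return it == buffers_.end() ? 0 : it->second.size();
    }
    std::size_t liveBuffers() const { return buffers_.size(); }

private:
    unsigned nextId_ = 1;            // 0 is reserved to mean "nothing bound"
    unsigned boundArrayBuffer_ = 0;  // the global "current" array buffer
    std::map<unsigned, std::vector<float>> buffers_;
};
```

A typical sequence is genBuffer, bindArrayBuffer, arrayBufferData, and eventually deleteBuffer. Notice that the upload call never names a buffer: it affects whatever was bound last, which is exactly the behavior that surprises people in real OpenGL code.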

Resources

If you would like to know more about OpenGL and how it works, you can read a lot more about it on the Khronos OpenGL Wiki.

We provide most of the boilerplate OpenGL code required to run the program because we believe writing it yourself would take too long and would not be a very good use of your time. We would rather that time be spent learning higher-level graphics concepts and trying out cool bells and whistles. If you would like to learn more about OpenGL, this website goes through how to start drawing things from scratch.

Qt

What is Qt?

Qt is a walled garden for cross-platform C++ native application development. We will primarily be using it for its rich windowing toolkit to make powerful UI and manage OpenGL Contexts. Qt comes with two primary tools: Qt Creator, which is an IDE, and Qt Designer, which is integrated into Qt Creator and creates UI forms visually. Qt is very popular and widely used in industry and academia. You can read more about it and their documentation.

How do I get started?

Simply open Qt Creator and open the .pro project file in the root directory, which will load the project and all sub-projects.

It will ask you to create a build-configuration. Simply hit okay and you are ready to go. Select either Debug or Release build mode and hit run to build.

If you ever need to add or remove a file, those changes need to be reflected in the .pro file. Operations done through Qt Creator are typically applied to the .pro file automatically, while operations done outside of Qt Creator require you to edit the .pro file and re-run qmake manually.

C++ Resources

- C++ Language Tutorial - useful for quick reference

- Thinking in C++ and Thinking in C by Bruce Eckel

- CSE303 Spring 2009

- CSE303 Winter 2010

Video Editing with Blender

Blender is a free and open source 3D creation suite. It supports the entirety of the 3D pipeline: modeling, rigging, animation, simulation, rendering, and even video editing, which is what this guide will focus on. For our purposes, Blender is excessively heavy and confusing at points. If you have access to other editing software that supports compositing image sequences, such as Adobe Premiere or Adobe After Effects, you can use that instead. That said, Blender is already installed on the lab machines and is also available to download for free. This guide goes through the basics of creating your animation; we also suggest looking up Blender video-editing tutorials online for more details.

First, set up the settings for the video you're creating:

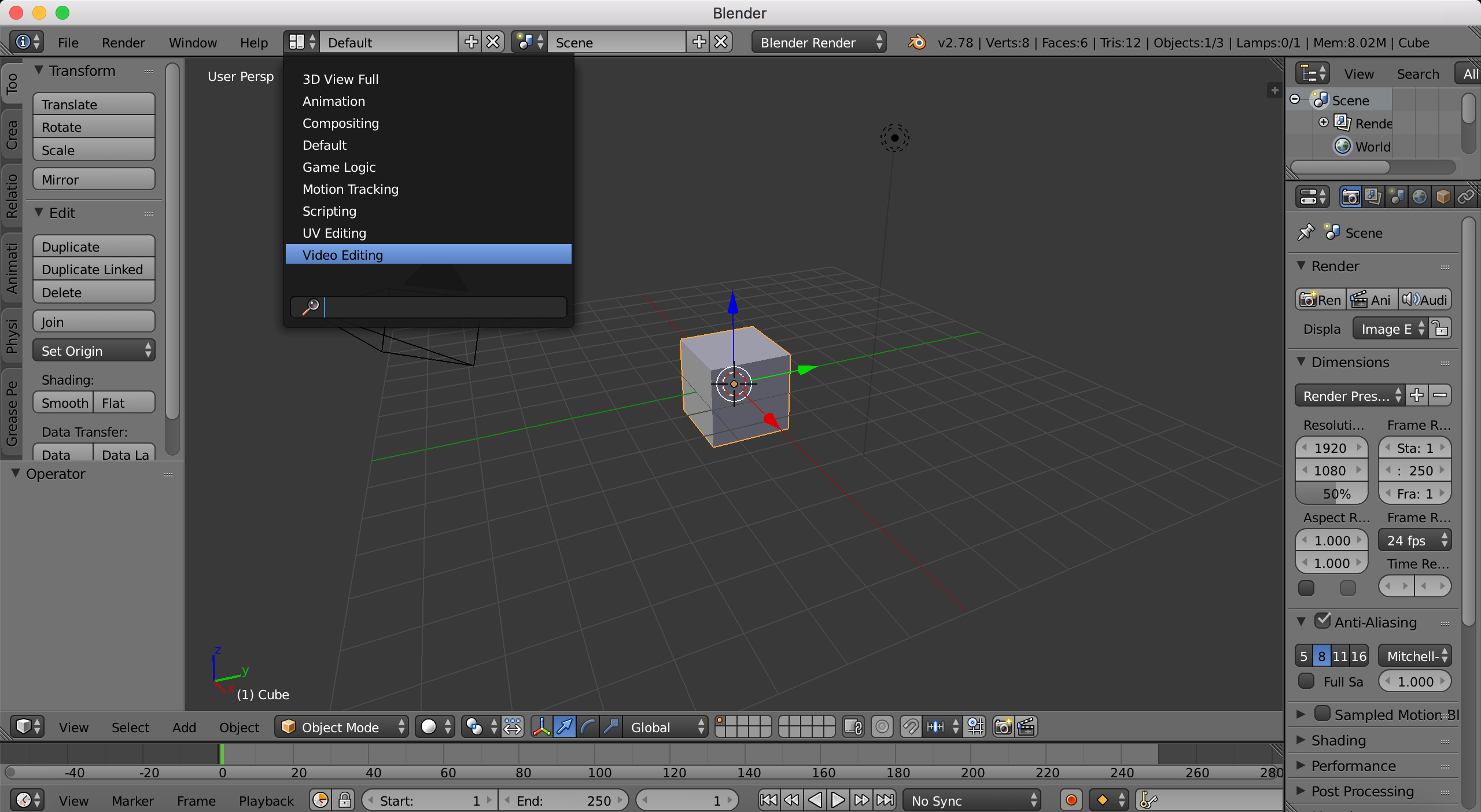

- At the top dropdown where it says 'Default', click and select 'Video Editing' to change the window layout.

- In the middle menubar, click on the icon to the left of 'View' and select 'Properties'. This chooses what content fills that window (usually the one above the toolbar).

- Under 'Dimensions', set the 'Resolution' to your desired values. Make sure the percentage is still '100%'. Animator cameras output 1280 x 720 by default.

- Change the start frame and end frame number to match your desired length (or you can come back and do this later). Also change your framerate to the same frames-per-second value you used inside Animator (by default, 30fps).

- Then scroll down to 'Output' and set the folder you want to save your final movie in.

- Change the file format from 'PNG' to 'H.264'. Then go to 'Encoding' and change the format to 'MPEG-4'. 'Codec' should still be 'H.264'.

- Lastly, if you would like to add audio to your video, choose 'AAC' for your 'Audio codec'.

Next, add your shots as image sequences to your timeline:

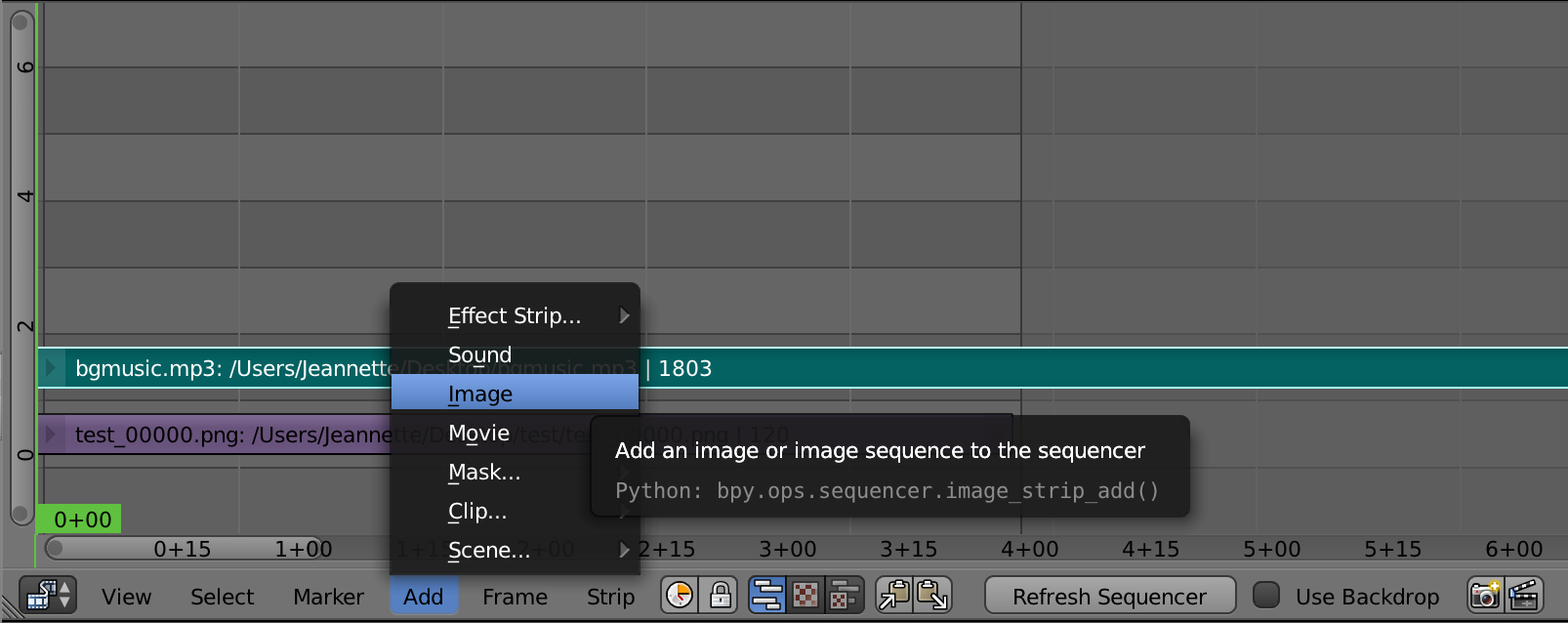

- On the timeline menubar near the bottom of your screen, select Add->Image.

- Navigate to the folder that contains the still-frames you exported from Animator. Press A to select all files then click 'Add Image Strip'.

- Your clip should now be visible in the timeline. Repeat for as many shots as you've created out of Animator.

- Right-click and drag a clip to move and rearrange it, then click again to lock it back into the timeline.

- You'll notice that the timeline has different tracks stacked vertically. You can overlay shots and use effects to composite them into the same time position, or put sound on another track and time it to match the video below it.

- Delete strips by going to Strip->Erase Strips or by selecting the clip and pressing Delete (Fn + Delete on Macs).

- Play your animation by pressing Space then selecting 'Play Animation', or by selecting the movie clapboard icon on the far right of the timeline menubar. Press Escape or the button with the red x 'Anim Player' to stop playback.

- Use Add->Sound on the timeline menubar if you want sound in your animation.

When you're ready, export your animation:

- Scroll to the top of the Properties window in the top left again and under Render, press 'Animation'.

- You should be able to see each frame get rendered out. Go to the output folder you set and check that it plays and has sound if you added any.

- If there are any parts of your animation you're unhappy about, go back and keep editing! Remember to save your project by opening an 'Info' menubar if there isn't one yet, then go to File->Save As to save a .blend project.