Project 2: Modeler

Assigned: Friday, Oct 22

Due: Wednesday, Nov 3 by 11:59 pm

Artifact Due: Monday, Nov 8

Help Sessions (Graphics lab, Sieg 327):

Friday, Oct 22 - 2:30 and 3:30 PM

Project TA: Jeffrey Booth

To get going, you need to get the skeleton source code. This is distributed via SVN, which is all set up for you. In the labs, we will be using TortoiseSVN. To get the source code, check out the following repository URL:

file://\\ntdfs/cs/unix/projects/instr/CurrentQtr/cse457/modeler/YOUR_ASSIGNED_GROUP_NAME/source

When copying this string (then editing the group name), be careful that the "\"s and "/"s are preserved exactly as they appear on this page.

The same repository can also be checked out over SSH at:

svn+ssh://YOUR_NET_ID@attu.cs.washington.edu/projects/instr/CurrentQtr/cse457/modeler/YOUR_ASSIGNED_GROUP_NAME/source

If you plan to work from home, you will either need to use the FLTK Installer or point Visual Studio to the correct include and library directories for the FLTK that came in your repository (since normally it points to the local one on each lab machine). Instructions for how to do this are provided separately.

To open and build the project, double-click modeler.vcproj.

A hierarchical model is a way of grouping together shapes and attributes to form a complex object. Parts of the object are positioned relative to each other instead of the world. Think of each object as a tree, with nodes decreasing in complexity as you move from root to leaf. Each node can be treated as a single object, so that when you modify a node you end up modifying all its children together. Hierarchical modeling is a very common way to structure 3D scenes and objects, and is found in many other contexts. If hierarchical modeling is unclear to you, you are encouraged to look at the relevant lecture notes.

You must come up with a character that meets these requirements:

You will want to make a model to draw your character, by extending the Model class in model.h:

class MyModel : public Model {

public:

// Constructor for MyModel

MyModel() {

}

// Draws the model onscreen

void draw() {

// ... call your drawing functions here ...

}

};

The easiest way to get started is to just modify the existing Scene class in sample.cpp. It extends Model, and in the main() function at the bottom, an instance of it is given to ModelerUserInterface to be displayed onscreen. Later on, you can create your own Model subclass and add it to the Scene class, much like the PointLight and DirectionalLight Models are added. See sample.cpp for details.

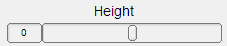

A property is an aspect of your model that the user can control. Modeler represents different types of properties with Property classes in model.h:

To add a property to your model class, do the following:

class MyModel : public Model {

// Make sure protected: comes before your properties, unless you want to be able to access them from other classes (in that case, use public:).

protected:

// Add the property here:

RangeProperty height;

public:

MyModel() :

// Put the property's constructor here, after the above colon:

height("Height", 0.0f, 10.0f, 0.0f, 0.1f)

{

// Add the property to the model's GroupProperty, which is a group of properties that's a property of every Model:

properties.add(&height);

}

void draw() {

// Use your new property's value for something, e.g. stretch the model along Y:

glScalef(1, height.getValue(), 1);

}

};

Now, when you open Modeler, a control for your property appears on the left panel.

For further details on each property class, look in model.h. To see how they are added to models, look at the skeleton code's sample model in sample.cpp.

WARNING: If you try to set the value of a property of your model by calling setValue(), setRed(), etc., the slider, color picker, or checkbox corresponding to that property will not be updated to show that property's new value. This problem is an oversight in the design of the new Modeler, but will not prevent you from completing the project requirements. If you find yourself needing to set your model's properties, consult your TAs, because you might not be using the Model class the way it was intended to be used.

The graphics primitives you have (box, sphere, and cylinder) only accept parameters that define their dimensions. That means you can draw a box, sphere, or cylinder of any size, but it will always be at the origin with the same orientation. What if you want to move it somewhere else, or rotate it to a different orientation -- and you can't edit the primitive's drawing code?

In OpenGL, every vertex v is multiplied by the modelview matrix M, then by the projection matrix P, so the vertex that reaches the screen is P × M × v.

Since Modeler takes care of projection, you'll modify the modelview matrix. OpenGL has several functions that create matrices for common transformations and then multiply them into the modelview matrix, including glTranslatef(x, y, z), glRotatef(angle, x, y, z), and glScalef(x, y, z).

If you called glTranslatef(1, 0, 0), then called drawSphere(1), the sphere would be drawn centered around (1, 0, 0) because all of its vertices had 1 added to their X-coordinates. Each transformation has the effect of creating a "model space" with its own origin and axes. The sphere was drawn at the origin of a model space created by glTranslatef(1, 0, 0).
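To make the effect of glTranslatef() concrete, here is a CPU-side sketch of the same arithmetic: a 4x4 matrix stored in column-major order (the layout OpenGL uses), the translation matrix that glTranslatef(tx, ty, tz) multiplies in, and a function that applies it to a point. Mat4, Vec3, translation(), and transformPoint() are hypothetical helpers for illustration, not part of Modeler or OpenGL.

```cpp
#include <array>
#include <cassert>

// Hypothetical helper type: a 4x4 matrix stored column-major,
// the same element order OpenGL uses (m[col * 4 + row]).
using Mat4 = std::array<float, 16>;

struct Vec3 { float x, y, z; };

// Builds the matrix that glTranslatef(tx, ty, tz) multiplies onto
// the modelview matrix.
Mat4 translation(float tx, float ty, float tz) {
    Mat4 m = {1, 0, 0, 0,     // column 0
              0, 1, 0, 0,     // column 1
              0, 0, 1, 0,     // column 2
              tx, ty, tz, 1}; // column 3 holds the translation
    return m;
}

// Multiplies the homogeneous point (x, y, z, 1) by m.
Vec3 transformPoint(const Mat4& m, const Vec3& p) {
    float in[4] = {p.x, p.y, p.z, 1.0f};
    float out[4] = {0, 0, 0, 0};
    for (int row = 0; row < 4; ++row)
        for (int col = 0; col < 4; ++col)
            out[row] += m[col * 4 + row] * in[col];
    assert(out[3] != 0.0f); // affine matrices keep w = 1
    return {out[0] / out[3], out[1] / out[3], out[2] / out[3]};
}
```

For example, transformPoint(translation(1, 0, 0), {0, 1, 0}) gives (1, 1, 0): every vertex simply gains 1 in X.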

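Once you have applied a transformation to the modelview matrix, it will be applied to EVERY point sent to the graphics card from then on! To undo the transformation, you need to remember the matrix's contents. OpenGL keeps a stack of matrices for exactly this purpose: glPushMatrix() saves a copy of the current matrix, and glPopMatrix() restores the most recently saved one.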

Make sure you match each glPushMatrix() with a corresponding glPopMatrix(), so that your modelview matrix is returned to its original state. This makes it easy to "nest" your transformations:

// Here, modelview matrix is in world space

glPushMatrix(); // save world space matrix

// Still in world space

glTranslatef(1, 0, 0);

// Now in model space (everything translated by +1 along the X axis).

drawSphere(1);

// Here's a "nested" transformation. After the corresponding glPopMatrix(),

// we'll be back in model space.

glPushMatrix(); // save model space matrix

// Still in model space

glTranslatef(3, 0, 0);

// Now in "model space 2"

drawSphere(1);

glPopMatrix(); // copy model space matrix into modelview matrix

// Back in model space

glPopMatrix(); // copy world space matrix into modelview matrix

// Back in world space

Each "level" of nesting counts as a level of hierarchy for this assignment if something is drawn at that level.
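The push/pop pattern above behaves like a stack of matrices, where each new transformation is multiplied onto the matrix at the top. The sketch below imitates that on the CPU so you can check why the nested sphere ends up centered at x = 4 while the first one stays at x = 1. MatrixStack and its methods are hypothetical names that only mimic glPushMatrix(), glPopMatrix(), and glTranslatef(); in Modeler you call the real OpenGL functions.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

using Mat4 = std::array<float, 16>; // column-major, like OpenGL

Mat4 identity() {
    return {1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1};
}

// Returns a * b (column-major); OpenGL post-multiplies each new
// transformation onto the current matrix in the same way.
Mat4 multiply(const Mat4& a, const Mat4& b) {
    Mat4 r{};
    for (int col = 0; col < 4; ++col)
        for (int row = 0; row < 4; ++row)
            for (int k = 0; k < 4; ++k)
                r[col * 4 + row] += a[k * 4 + row] * b[col * 4 + k];
    return r;
}

// Hypothetical CPU-side imitation of the modelview matrix stack.
class MatrixStack {
public:
    MatrixStack() : stack_{identity()} {}
    void push() { stack_.push_back(stack_.back()); }  // like glPushMatrix()
    void pop() {                                       // like glPopMatrix()
        assert(stack_.size() > 1 && "unmatched pop");
        stack_.pop_back();
    }
    void translate(float x, float y, float z) {        // like glTranslatef()
        Mat4 t = identity();
        t[12] = x; t[13] = y; t[14] = z;
        stack_.back() = multiply(stack_.back(), t);
    }
    // Where the origin of the current model space lands in world space.
    std::array<float, 3> origin() const {
        const Mat4& m = stack_.back();
        return {m[12], m[13], m[14]};
    }
    std::size_t depth() const { return stack_.size(); }
private:
    std::vector<Mat4> stack_;
};
```

Calling push(), translate(1, 0, 0), push(), translate(3, 0, 0) leaves origin() at x = 4; each pop() then restores the previous level exactly, which is why every glPushMatrix() needs a matching glPopMatrix().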

In OpenGL, all scenes are made of primitives like points, lines, triangles and quadrilaterals. Only the following primitives create triangles and quadrilaterals, which can be used to make 3D surfaces:

For each primitive, you send a list of vertices, which are the points that make up the primitive. The resulting shape depends on the primitive you chose. To send a vertex to the graphics hardware, you call glVertex3f(x, y, z). Here's an example that draws a unit square in the z = 0 plane:

glBegin(GL_QUADS);
glVertex3f(0, 0, 0);
glVertex3f(1, 0, 0);
glVertex3f(1, 1, 0);
glVertex3f(0, 1, 0);
glEnd();

You can read more about OpenGL's primitives here.

However, these primitives aren't very sophisticated building blocks for scenes, so we'll invent more interesting ones.

Replace an existing primitive with your own drawing code, or implement a new primitive. Your primitive must have texture mapping and per-vertex normals consistent with what you replaced. It must draw the surfaces by using glBegin() and glEnd(). You must replace at least one of them to meet this requirement, but will earn a whistle of extra credit for each additional one:

You may also, instead, implement a new shape from the bells-and-whistles list. In order to count for this requirement, the shape must support texture mapping and have sensible per-vertex normals. The shape must approximate a form with some sort of curvature (such as a surface of revolution), so that your shape's vertex normals are not always perpendicular to their corresponding faces. In other words, shapes that only use flat shading (like a dodecahedron or icosahedron) cannot fulfill this requirement. A correct implementation will earn you all of the extra credit, minus a whistle, in addition to satisfying this requirement. Ask your TAs if you want to use an extra credit shape for this requirement, since not all extra credit shapes may fulfill it. If you don't want the required shape to be part of your final scene, create a switch to turn it on or off by using a BooleanProperty in your model or scene.
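As a starting point for a replacement cylinder, here is a sketch of just the geometry math: for each point around the circumference it produces a position, a per-vertex normal taken from the ideal cylinder (pointing straight out from the axis rather than perpendicular to the flat facets), and a texture coordinate. CylVertex and cylinderSide() are hypothetical names, and the sketch assumes the cylinder runs from z = 0 to z = height along +Z, which may not match the skeleton's cylinder primitive, so check its orientation before swapping yours in. Sending the vertices in order with glNormal3f(), glTexCoord2f(), and glVertex3f() between glBegin(GL_QUAD_STRIP) and glEnd() would draw the side.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical vertex record: position, per-vertex normal, texture coordinate.
struct CylVertex {
    float px, py, pz;
    float nx, ny, nz;
    float s, t;
};

// Side of a cylinder of the given radius from z = 0 to z = height,
// as (slices + 1) pairs of vertices (bottom, top) for GL_QUAD_STRIP.
// The seam is duplicated so s runs all the way from 0 to 1.
std::vector<CylVertex> cylinderSide(float radius, float height, int slices) {
    assert(slices >= 3 && radius > 0.0f);
    const float kPi = 3.14159265358979f;
    std::vector<CylVertex> verts;
    for (int i = 0; i <= slices; ++i) {
        float u = static_cast<float>(i) / slices;  // 0..1 around the axis
        float angle = 2.0f * kPi * u;
        float c = std::cos(angle), sn = std::sin(angle);
        // Normal of the ideal cylinder: radial direction, no z component.
        verts.push_back({radius * c, radius * sn, 0.0f, c, sn, 0.0f, u, 0.0f});
        verts.push_back({radius * c, radius * sn, height, c, sn, 0.0f, u, 1.0f});
    }
    return verts;
}
```

The caps would need their own vertices with normals along -Z and +Z, since a vertex on the rim belongs to two surfaces with different normals.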

When you want to have Modeler load resources like textures for you, you create a subclass of Vault, and add Texture2D fields to it. At the top of sample.cpp is a class called SampleVault, which stores your model's textures and shaders. As you can see, the image "checkers.png" is loaded into a Texture2D field called texture:

/**

* This class contains your shaders and textures.

*/

class SampleVault : public Vault {

public:

Texture2D texture;

ShaderProgram shader;

/** Creates a new SampleVault */

SampleVault() : // after this colon go the constructors for your shaders

// and textures:

texture("checkers.png"),

shader("shader.vert", "shader.frag", NULL)

{}

};

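As you can see from the skeleton code, there are two steps to adding a texture: declare a Texture2D field in your Vault subclass, and initialize it with the image's filename in the Vault constructor's initializer list.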

Now, when Modeler loads, it will create an instance of your Vault! If there are any errors loading any of the textures, Modeler will (hopefully) alert you.

When you want to access the textures in your Vault, you need to get a pointer to the Vault that Modeler loaded, and cast it to a SampleVault pointer so you can access its properties. Here's how:

SampleVault* vault = (SampleVault*) ModelerUserInterface::getInstance()->getVault();

NOTE: The draw() method in the skeleton code contains this line of code. Can you find it?!?

Now, if you made a Texture2D field called texture, you could tell OpenGL to use it like this:

vault->texture.use();

If you want to get the texture's ID for more advanced stuff:

GLuint textureID = vault->texture.getID();

If you want to use another texture, just call that texture object's use() method. To stop using textures, call:

glBindTexture(GL_TEXTURE_2D, 0);

Texture mapping allows you to "wrap" images around your model by mapping points on an image (called a texture) to vertices on your model. For each vertex, you specify which point on the texture should line up with it by calling glTexCoord2f(s, t), where s is the X-coordinate and t is the Y-coordinate of that point on the texture. Call glTexCoord2f() right before the glVertex3f() call for the vertex you want it to apply to:

vault->texture.use();
glBegin(GL_TRIANGLES);
...
glTexCoord2f(0, 0);
glVertex3f(0, 0, 0);
...
glEnd();
glBindTexture(GL_TEXTURE_2D, 0);

Surface normals are perpendicular to the plane that's tangent to a surface at a given vertex. Surface normals are used for lighting calculations, because they help determine how light reflects off of a surface. Each normal is sent by calling glNormal3f(x, y, z) before the call to glVertex3f(), much like texture mapping.

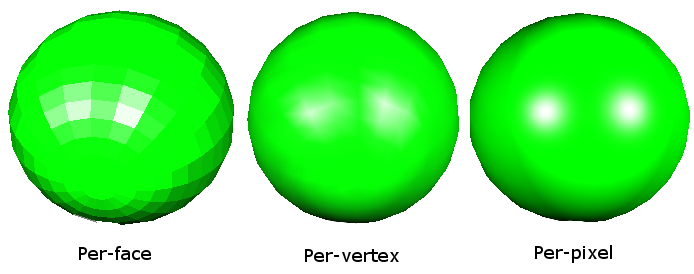

In OpenGL, we often want to approximate smooth shapes like spheres and cylinders using only triangles and quadrilaterals. One way to make the lighting look smooth is to use the normals from the shape we're trying to approximate, rather than normals perpendicular to the polygons we draw. This means we calculate a normal for each vertex (per-vertex normals) rather than for each face (per-face normals). Shaders let us get even smoother lighting by interpolating the normals across each polygon and computing the lighting at every pixel. You can compare these methods below:

In your custom primitive, you will need to use this trick: calculate your normals from the shape you're trying to model, rather than the polygons you're using to model it.
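To see the difference, the sketch below takes one triangle whose corners lie on a unit sphere and compares its face normal (the cross product of two edges, identical at all three corners) with the sphere's own per-vertex normals (for a sphere centered at the origin, each vertex position normalized). The helper names are hypothetical.

```cpp
#include <cassert>
#include <cmath>

struct Vec3 { float x, y, z; };

Vec3 sub(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
Vec3 cross(Vec3 a, Vec3 b) {
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}
float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 normalize(Vec3 v) {
    float len = std::sqrt(dot(v, v));
    assert(len > 0.0f);
    return {v.x / len, v.y / len, v.z / len};
}

// Per-face normal: perpendicular to the flat triangle (counterclockwise winding).
Vec3 faceNormal(Vec3 a, Vec3 b, Vec3 c) {
    return normalize(cross(sub(b, a), sub(c, a)));
}

// Per-vertex normal of the ideal shape: for a sphere centered at the
// origin it is just the normalized vertex position.
Vec3 sphereVertexNormal(Vec3 p) { return normalize(p); }
```

For the triangle (1, 0, 0), (0, 1, 0), (0, 0, 1), the face normal is (1, 1, 1)/√3 at every corner, while the sphere normals are the three axis directions, so the lighting varies across the face instead of being constant.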

In 3D graphics, it's convenient to define a camera by three vectors: the camera's position (the eye), the point it is looking at, and an up-vector that says which way is up.

Unfortunately, in OpenGL, the camera is stuck at the origin, looking down the -z axis, with its up-vector aligned with the y axis. When the camera is here, we're in camera space. The camera must be here for projection to work properly, so we can't move the camera. Instead, we can create an affine transformation between world and camera space.

OpenGL contains a utility function called gluLookAt(), which takes these three vectors and creates an affine transformation that "moves" the entire scene such that the camera ends up at the origin, looking down the negative Z-axis, with its up-vector pointing along the positive Y-axis. It applies this transformation to the modelview matrix, so that the transformation gets applied to all subsequent vertices. The Camera class (camera.cpp) contains a method applyViewingTransform(), which uses gluLookAt() for the Modeler camera.

Replace the call to gluLookAt() in camera.cpp with your own code. You may choose to do this using glTranslate()s and glRotate()s. Or, even better, you can build the matrix yourself and use glMultMatrix() to apply the transformation.
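One way to build the matrix yourself follows the construction documented for gluLookAt(): form an orthonormal basis from the viewing direction and the up-vector, use it as the rotation part, and translate by the negated eye position. This is a sketch, assuming you hand the result to glMultMatrixf(), which expects 16 floats in column-major order; lookAtMatrix() is a hypothetical name, and the arguments available inside applyViewingTransform() in camera.cpp may be stored differently.

```cpp
#include <array>
#include <cassert>
#include <cmath>

struct Vec3 { float x, y, z; };

static Vec3 sub(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
static float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
static Vec3 cross(Vec3 a, Vec3 b) {
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}
static Vec3 normalize(Vec3 v) {
    float len = std::sqrt(dot(v, v));
    assert(len > 0.0f && "degenerate vector");
    return {v.x / len, v.y / len, v.z / len};
}

// Column-major 4x4 viewing matrix equivalent to gluLookAt(eye, center, up).
// Pass result.data() to glMultMatrixf() while the modelview matrix is current.
std::array<float, 16> lookAtMatrix(Vec3 eye, Vec3 center, Vec3 up) {
    Vec3 f = normalize(sub(center, eye));  // viewing direction
    Vec3 s = normalize(cross(f, up));      // camera's right (+X)
    Vec3 u = cross(s, f);                  // corrected up (+Y)
    // Rows of the rotation are s, u, -f; the last column moves eye to the origin.
    return {s.x, u.x, -f.x, 0.0f,
            s.y, u.y, -f.y, 0.0f,
            s.z, u.z, -f.z, 0.0f,
            -dot(s, eye), -dot(u, eye), dot(f, eye), 1.0f};
}
```

With this matrix, the eye maps to the origin and the look-at point lands on the negative Z-axis, which is exactly the camera-space setup described above. Building it by hand also fails loudly (via the assert) when the up-vector is parallel to the viewing direction, a case gluLookAt() leaves undefined.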

A shader is a program that controls the behavior of a piece of the graphics pipeline on your graphics card.

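Shaders determine how the scene lighting affects the coloring of 3D surfaces. In OpenGL, there are two basic kinds of lights: point lights, which shine in all directions from a position in the scene, and directional lights, which shine in a single direction everywhere, as if from infinitely far away.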

A shading model determines how the final color of a surface is calculated from a scene's light sources and the object's material. We have provided a shader that uses the Blinn-Phong shading model for scenes with directional lights. See lecture notes for details on the Blinn-Phong shading model.

Add support for the scene's point light, by editing the files shader.vert and shader.frag. You need to include quadratic distance attenuation. Please open these files and read the comments for details. NOTE: You will need to CLAMP your attenuation factor to a value between 0 and 1 (inclusive) in order to match the sample solution.
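The per-light math you add to the shaders can be prototyped on the CPU first. This C++ sketch mirrors one common formulation: attenuation = 1 / (kc + kl·d + kq·d²), clamped to [0, 1] as the note above requires, multiplied by a Blinn-Phong diffuse term and a specular term that uses the half vector between the light and view directions. The coefficient names kc, kl, kq and the function names are hypothetical; read the comments in shader.vert and shader.frag for the exact uniforms and formula the sample solution expects, then port the logic to GLSL there.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

struct Vec3 { float x, y, z; };

static Vec3 sub(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
static Vec3 add(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
static float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
static float length(Vec3 v) { return std::sqrt(dot(v, v)); }
static Vec3 normalize(Vec3 v) {
    float len = length(v);
    assert(len > 0.0f);
    return {v.x / len, v.y / len, v.z / len};
}

// Quadratic distance attenuation 1 / (kc + kl*d + kq*d^2), clamped to [0, 1].
float attenuation(float d, float kc, float kl, float kq) {
    float denom = kc + kl * d + kq * d * d;
    if (denom <= 0.0f) return 1.0f;  // guard: no usable attenuation terms
    return std::min(std::max(1.0f / denom, 0.0f), 1.0f);
}

// Scalar Blinn-Phong intensity (diffuse + specular) from one point light.
// n must be a unit normal; colors and ambient light are left out for brevity.
float blinnPhongPoint(Vec3 n, Vec3 p, Vec3 lightPos, Vec3 eye,
                      float kd, float ks, float shininess,
                      float kc, float kl, float kq) {
    Vec3 toLight = sub(lightPos, p);
    Vec3 L = normalize(toLight);
    Vec3 V = normalize(sub(eye, p));
    float nDotL = dot(n, L);
    if (nDotL <= 0.0f) return 0.0f;  // light is behind the surface
    float specular = 0.0f;
    Vec3 sumLV = add(L, V);
    if (length(sumLV) > 0.0f) {
        Vec3 H = normalize(sumLV);   // Blinn's half vector
        specular = ks * std::pow(std::max(dot(n, H), 0.0f), shininess);
    }
    float diffuse = kd * nDotL;
    return attenuation(length(toLight), kc, kl, kq) * (diffuse + specular);
}
```

Without the clamp, a small denominator (for example, kc < 1 near the light) would give an attenuation factor above 1 and make surfaces brighter than the light itself.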

We have provided the sample solution modeler.exe in your repository, which lets you compare your shader to the (correct) sample solution shader. To choose which shader to show, click on "Scene" and check the box for the shader you want to use. The sample solution also contains both a point light and a directional light for you to manipulate. Click the "Point Light" or "Directional Light" on the list in the top left corner to edit a light's properties or move it around.

Create another shader that is worth at least 1 whistle on the chart below. Any additional bells or whistles will be extra credit. You must keep your point light Blinn-Phong shader from Part 4 separate, so we can grade it separately. Consult the OpenGL orange book for some excellent tips on shaders. Ask your TAs if you would like to implement a shader that isn't listed below. Credit for any shader may be adjusted depending on the quality of the implementation, but any reasonable effort should at least satisfy the requirement.

Cartoon Shader - Create a shader that produces a cartoon effect by drawing a limited set of colors. This was downgraded to a bell due to its simplicity.
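The core of a cartoon shader is quantizing the lighting intensity into a few flat bands. Here is that step as a C++ function you could port to the fragment shader and multiply by the surface color; the function name, the band count, and the choice to round down within each band are assumptions, and many variants exist (custom thresholds, dark outlines where the surface turns away from the viewer).

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Snaps a diffuse intensity in [0, 1] to one of `levels` evenly spaced
// values from 0 to 1, so smooth shading becomes flat bands.
float toonIntensity(float intensity, int levels) {
    assert(levels >= 2);
    float i = std::min(std::max(intensity, 0.0f), 1.0f);
    float band = std::floor(i * levels);  // 0 .. levels
    band = std::min(band, static_cast<float>(levels - 1));
    return band / static_cast<float>(levels - 1);
}
```

With 4 levels, every intensity from 0.25 up to just under 0.5 becomes 1/3, so a whole region of the surface is drawn in one color.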

Blinn-Phong and Texture Mapping Shader - Extend your Blinn-Phong shader to support texture mapping. Remember to keep a copy of your original Blinn-Phong shader for grading. This was upgraded to a bell because you have to do both texture mapping and Blinn-Phong shading.

Tessellated Procedural Shader - Make a shader that produces an interesting, repeating pattern, such as a brick pattern, without using a texture.
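A brick pattern can be computed from a surface coordinate alone, with no texture: divide the plane into rows, shift every other row by half a brick, and test whether the point falls in a mortar gap. This C++ sketch of that test is meant to be ported to GLSL, which has floor() and mod() too; isBrick() is a hypothetical name, the brick and mortar sizes are arbitrary, and you would typically pass an object-space coordinate from the vertex shader as (u, v) so the pattern sticks to the model.

```cpp
#include <cassert>
#include <cmath>

// Returns true if (u, v) lands on a brick, false if it lands on mortar.
// brickW, brickH: size of one brick cell including mortar; mortar: gap width.
bool isBrick(float u, float v, float brickW, float brickH, float mortar) {
    assert(brickW > mortar && brickH > mortar && mortar >= 0.0f);
    float row = std::floor(v / brickH);
    // Offset every other row by half a brick (running bond pattern).
    if (std::fmod(row, 2.0f) != 0.0f) u += 0.5f * brickW;
    // Position inside the current brick cell, in [0, size).
    float x = u - brickW * std::floor(u / brickW);
    float y = v - brickH * std::floor(v / brickH);
    return x >= mortar && y >= mortar;
}
```

In the fragment shader, the boolean would choose between a brick color and a mortar color before lighting is applied.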

Bump Mapping Shader - This shader uses a texture to perturb the surface normals of a surface to create the illusion of tiny bumps, without introducing additional geometry.

Environment Mapped Shader - To make an object appear really shiny (i.e. metallic), it needs to reflect the objects around it. One way to do this is to take a panoramic picture of the surroundings, store it in a texture, and use that texture to determine what should be reflected. For simplicity, we recommend obtaining an existing environment map from somewhere (perhaps making it yourself with a 3D raytracer).

Cloud / Noise Shader - Create a shader that uses noise functions (like Perlin noise) to generate clouds. You may not use textures for this shader. Credit depends on the realism of the clouds.

You can use the sample solution Modeler to develop some of these shaders, but others require texture maps to be provided -- which the sample solution may not provide to your shader.

Your Vault subclass, SampleVault, can also load shaders for you! Shader files are loaded, compiled, and linked by ShaderProgram objects. If you want to add a shader:

The ShaderProgram constructor takes three filenames:

/**

* This class contains your shaders and textures.

*/

class SampleVault : public Vault {

public:

Texture2D texture;

ShaderProgram shader;

/** Creates a new SampleVault */

SampleVault() : // after this colon go the constructors for your shaders

// and textures:

texture("checkers.png"),

shader("shader.vert", "shader.frag", NULL)

{}

};

If you have an error in your shader code, YOU DON'T HAVE TO RESTART MODELER! Instead, fix your shader, then go to File->Reload Textures And Shaders.

When you want to access the shaders in your Vault, you need to get a pointer to the Vault that Modeler loaded, and cast it to a SampleVault pointer so you can access its properties. Here's how:

SampleVault* vault = (SampleVault*) ModelerUserInterface::getInstance()->getVault();

NOTE: The draw() method in the skeleton code contains this line of code. Can you find it?!?

Now, if you made a ShaderProgram field called shader, you could tell OpenGL to use it on your scene objects like this:

vault->shader.use();

If you want to get the shader program's ID for more advanced stuff:

GLuint shaderProgramID = vault->shader.getID();

If you want to use another shader, just call that shader object's use() method. To stop using shaders, call:

glUseProgram(0);

You can access the turnin folder on Windows by going to your O: drive in My Computer and navigating to cs\unix\projects\instr\CurrentQtr\cse457\modeler\YOUR-GROUP-NAME\turnin .

If you want to turn your files in from home, download an SSH File Transfer client like FileZilla, which is free and available for Windows, Linux, and Mac.

The modeler application itself is the artifact. Create a zip file with exactly what you need to run your executable, i.e. the binary (not source) plus any textures or shaders you may have used. The idea is to have it run out-of-the-box when unzipped. Take a screenshot using File->Save Image... from inside your app. Turn in the zip file and screenshot to the artifact folder using this naming scheme:

<group-folder-name>.jpg or <group-folder-name>.png <group-folder-name>.zip

Two whistles are worth one bell, and one bell is worth one point on the project.

Come up with another whistle and implement it. A whistle is something that extends the use of one of the things you are already doing. It is part of the basic model construction, but extended or cloned and modified in an interesting way. Ask your TAs to make sure this whistle is valid.

Build a complex shape as a set of polygonal faces, using triangles (either the provided primitive or straight OpenGL triangles) to render it.

Examples of things that don't count as complex: a pentagon, a square, a circle. Examples of what does count: dodecahedron, 2D function plot (z = sin(x^2 + y)), etc.
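A 2D function plot is a regular grid of samples with each grid cell split into two triangles. This sketch builds the vertex positions and a triangle index list for z = sin(x^2 + y) over a rectangular range; Mesh and functionPlot() are hypothetical names, and returning indices (rather than issuing glVertex3f() calls directly) is a design choice that makes it easy to compute normals before drawing. To draw it, loop over the triangles inside glBegin(GL_TRIANGLES) and glEnd().

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <vector>

struct Mesh {
    std::vector<std::array<float, 3>> positions;
    std::vector<std::array<int, 3>> triangles;  // indices into positions
};

// Samples z = sin(x^2 + y) on an n x n grid over [x0, x1] x [y0, y1].
Mesh functionPlot(float x0, float x1, float y0, float y1, int n) {
    assert(n >= 2);
    Mesh mesh;
    for (int j = 0; j < n; ++j) {
        for (int i = 0; i < n; ++i) {
            float x = x0 + (x1 - x0) * i / (n - 1);
            float y = y0 + (y1 - y0) * j / (n - 1);
            mesh.positions.push_back({x, y, std::sin(x * x + y)});
        }
    }
    // Two triangles per grid cell, counterclockwise when viewed from +Z.
    for (int j = 0; j + 1 < n; ++j) {
        for (int i = 0; i + 1 < n; ++i) {
            int a = j * n + i, b = a + 1, c = a + n, d = c + 1;
            mesh.triangles.push_back({a, b, d});
            mesh.triangles.push_back({a, d, c});
        }
    }
    return mesh;
}
```

An n x n grid yields 2(n - 1)² triangles, so the resolution can be tied to a slider to trade detail for speed.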

On the Modeler menu bar, there is an Animate menu. When you click it and check the "Enable" box, your model's draw() method will get called about 50 times per second. You can use this fact to implement an animation. You can keep track of time by incrementing a counter every time your draw() method is called.
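A minimal sketch of the counter approach: keep a frame count in your model and derive a joint angle from it. swingAngle() and the frameCount member are hypothetical names. Because "about 50 times per second" is not exact, motion driven purely by a frame counter may speed up or slow down slightly with system load.

```cpp
#include <cassert>
#include <cmath>

// Converts a frame counter into a swing angle in degrees that oscillates
// between -amplitude and +amplitude once every periodSeconds.
// framesPerSecond defaults to 50, the approximate rate of the Animate menu.
float swingAngle(int frame, float amplitude, float periodSeconds,
                 float framesPerSecond = 50.0f) {
    assert(periodSeconds > 0.0f && framesPerSecond > 0.0f);
    const float kTwoPi = 6.28318530718f;
    float seconds = frame / framesPerSecond;
    return amplitude * std::sin(kTwoPi * seconds / periodSeconds);
}

// Sketch of how draw() might use it, with a hypothetical int member
// `frameCount` in your Model subclass:
//   ++frameCount;
//   glPushMatrix();
//   glRotatef(swingAngle(frameCount, 30.0f, 2.0f), 1, 0, 0);
//   ... draw the swinging limb ...
//   glPopMatrix();
```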

Add some widgets that control adjustable parameters to your model so that you can create individual-looking instances of your character.

Try to make these actually different individuals, not just "the red guy" and "the blue guy."

A display list is a "recording" of OpenGL calls that gets stored on the graphics card. Thus, display lists allow you to render complicated polygons much more quickly because you only have to tell the graphics card to replay the list of commands instead of sending them across the (slow) computer bus. A display list tutorial can be found here.

Implement smooth curve functionality. Examples of smooth curves are here.

These curves are a great way to lead into swept surfaces (see below). Functional curves will need to be demonstrated in some way. One great example would be to draw some polynomial across a curve that you define.

Students who implement swept surfaces will not be given a bell for smooth curves. That bell will be included in the swept surfaces bell. Smooth curves will be an important part of the animator project, so this will give you a leg up on that.
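As one example of a smooth curve, a cubic Bézier segment can be evaluated with de Casteljau's algorithm, which repeatedly interpolates linearly between control points. This is a sketch of that evaluation only; Point2 and bezier3() are hypothetical names, and other curve families (Catmull-Rom, B-splines) are equally valid choices. To draw the curve, you could sample it at many t values and connect the points with GL_LINE_STRIP.

```cpp
#include <cassert>

struct Point2 { float x, y; };

static Point2 lerp(Point2 a, Point2 b, float t) {
    return {a.x + (b.x - a.x) * t, a.y + (b.y - a.y) * t};
}

// Evaluates a cubic Bezier curve with control points p0..p3 at t in [0, 1]
// using de Casteljau's algorithm.
Point2 bezier3(Point2 p0, Point2 p1, Point2 p2, Point2 p3, float t) {
    assert(t >= 0.0f && t <= 1.0f);
    Point2 a = lerp(p0, p1, t), b = lerp(p1, p2, t), c = lerp(p2, p3, t);
    Point2 d = lerp(a, b, t), e = lerp(b, c, t);
    return lerp(d, e, t);
}
```

The curve starts at p0 and ends at p3, while p1 and p2 only pull it into shape; at t = 0.5 the result is (p0 + 3p1 + 3p2 + p3) / 8.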

Implement one or more non-linear transformations applied to a triangle mesh. This entails creating at least one function that is applied across a mesh with specified parameters. For example, you could generate a triangulated sphere and apply a function to a sphere at a specified point that modifies the mesh based on the distance of each point from a given axis or origin. Credit varies depending on the complexity of the transformation(s) and/or whether you provide user controls (e.g., sliders) to modify parameters.
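One simple non-linear transformation is a twist: rotate each vertex about the Y axis by an angle proportional to its height. The sketch below applies it to a list of vertex positions; twistY() and its twist-rate parameter (which you might drive from a slider) are hypothetical. Because the deformation is non-linear, the original normals are no longer correct afterward; recomputing them from the deformed triangles is one simple fix.

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <vector>

using Vertex = std::array<float, 3>;

// Twists vertices about the Y axis: a vertex at height y is rotated by
// y * radiansPerUnit. Vertices at y = 0 do not move.
void twistY(std::vector<Vertex>& verts, float radiansPerUnit) {
    assert(std::isfinite(radiansPerUnit));
    for (Vertex& v : verts) {
        float angle = v[1] * radiansPerUnit;
        float c = std::cos(angle), s = std::sin(angle);
        float x = v[0], z = v[2];
        v[0] = c * x + s * z;   // standard rotation about +Y
        v[2] = -s * x + c * z;
    }
}
```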

Implement the "Hitchcock Effect" described in class, where the camera zooms in on an object, whilst at the same time pulling away from it (the effect can also be reversed--zoom out and pull in).

The transformation should fix one plane in the scene--show this plane. Make sure that the effect is dramatic--adding an interesting background will help, otherwise it can be really difficult to tell if it's being done correctly.
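The key relationship: with a vertical field of view θ, the height of the scene visible at distance d is 2·d·tan(θ/2). Holding that height constant for the plane containing your subject while the camera moves produces the effect. The sketch computes the field of view for a new distance; dollyZoomFov() is a hypothetical name, and how you feed the angle into the projection (for example, as a gluPerspective()-style fovy in degrees) depends on how you modify Modeler's camera.

```cpp
#include <cassert>
#include <cmath>

// Returns the vertical field of view (degrees) that makes a plane at
// `distance` from the camera fill exactly `planeHeight` of the view.
float dollyZoomFov(float planeHeight, float distance) {
    assert(planeHeight > 0.0f && distance > 0.0f);
    const float kRadToDeg = 57.2957795131f;
    return 2.0f * std::atan(planeHeight / (2.0f * distance)) * kRadToDeg;
}
```

Compute planeHeight once from the starting setup (planeHeight = 2·d0·tan(θ0/2)), then call dollyZoomFov(planeHeight, d) every frame as the camera distance d changes: moving closer widens the angle and moving away narrows it, so the fixed plane stays the same size while the background stretches or compresses.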

Heightfields are great ways to build complicated looking maps and terrains pretty easily.
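A heightfield stores one height per grid point (often read from a grayscale image) and is drawn as a grid of triangles. For smooth lighting it also needs per-vertex normals, which can be estimated from neighboring heights with central differences. This sketch shows that normal estimate only; heightfieldNormal() is a hypothetical name, and the grid layout (row-major, spacing `cell` in X and Z, height in Y) is an assumption.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cmath>
#include <vector>

// h[j * width + i] is the height (Y) at X = i * cell, Z = j * cell.
// Returns the unit normal at grid point (i, j).
std::array<float, 3> heightfieldNormal(const std::vector<float>& h,
                                       int width, int depth, float cell,
                                       int i, int j) {
    assert(width >= 2 && depth >= 2 && cell > 0.0f);
    assert(static_cast<int>(h.size()) == width * depth);
    // Neighbors on each side, clamped at the borders (one-sided there).
    int x0 = std::max(i - 1, 0), x1 = std::min(i + 1, width - 1);
    int z0 = std::max(j - 1, 0), z1 = std::min(j + 1, depth - 1);
    float dhdx = (h[j * width + x1] - h[j * width + x0]) / ((x1 - x0) * cell);
    float dhdz = (h[z1 * width + i] - h[z0 * width + i]) / ((z1 - z0) * cell);
    // For y = h(x, z), an unnormalized normal is (-dh/dx, 1, -dh/dz).
    float nx = -dhdx, ny = 1.0f, nz = -dhdz;
    float len = std::sqrt(nx * nx + ny * ny + nz * nz);
    return {nx / len, ny / len, nz / len};
}
```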

Add a function in your model file for drawing a new type of primitive. The following examples will definitely garner two bells; if you come up with your own primitive, you will be awarded one or two bells based on its coolness.

Here are three examples:

(Variable) Use some sort of procedural modeling (such as an L-system) to generate all or part of your character.

Have parameters of the procedural modeler controllable by the user via control widgets.

In a previous quarter, one group generated these awesome results.
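The string-rewriting core of an L-system is small: every symbol that has a rule is replaced by its production, all in parallel, once per iteration. The sketch below shows only that step; expandLSystem() is a hypothetical name, and the rule in the example is one classic branching-plant rule. Interpreting the result is up to your model; one common interpretation is F = draw a segment and translate to its end, + and - = glRotatef(), [ and ] = glPushMatrix() and glPopMatrix(). Exposing the iteration count or branch angle as RangeProperty controls would cover the user-control part.

```cpp
#include <cassert>
#include <map>
#include <string>

// Applies the production rules to every symbol in parallel, `iterations` times.
// Symbols without a rule are copied unchanged.
std::string expandLSystem(const std::string& axiom,
                          const std::map<char, std::string>& rules,
                          int iterations) {
    assert(iterations >= 0);
    std::string current = axiom;
    for (int n = 0; n < iterations; ++n) {
        std::string next;
        for (char symbol : current) {
            auto rule = rules.find(symbol);
            next += (rule != rules.end()) ? rule->second : std::string(1, symbol);
        }
        current = next;
    }
    return current;
}
```

The string grows quickly (each F expands to five more F's per iteration here), so keep the iteration slider's maximum small.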

In addition to mood cycling, have your character react differently to UI controls depending on what mood they are in.

Again, there is some weight in this item because the character reactions are supposed to make sense in a story telling way.

Think about the mood that the character is in, think about the things that you might want the character to do, and then provide a means for expressing and controlling those actions.

One difficulty with hierarchical modeling using primitives is the difficulty of building "organic" shapes.

It's difficult, for instance, to make a convincing looking human arm because you can't really show the bending of the skin and bulging of the muscle using cylinders and spheres.

There has, however, been success in building organic shapes using metaballs.

Implement your hierarchical model and "skin" it with metaballs. Hint: look up "marching cubes" and "marching tetrahedra" --these are two commonly used algorithms for volume rendering.

For an additional bell, the placement of the metaballs should depend on some sort of interactively controllable hierarchy.

Try out a demo application.
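At the heart of metaballs is a scalar field: each ball contributes a value that falls off with distance, the contributions are summed, and the surface is wherever the sum equals a threshold. A polygonizer such as marching cubes then samples this field on a grid to produce triangles. This sketch shows only the field evaluation and the inside test, using the simple r²/d² falloff as an assumption (smoother polynomial falloffs are also common); Ball, metaballField(), and insideMetaballs() are hypothetical names.

```cpp
#include <cassert>
#include <vector>

struct Ball { float x, y, z, radius; };

// Sum of r^2 / d^2 contributions. Points where the result equals the
// threshold (commonly 1) lie on the blended metaball surface.
float metaballField(const std::vector<Ball>& balls, float px, float py, float pz) {
    float sum = 0.0f;
    for (const Ball& b : balls) {
        float dx = px - b.x, dy = py - b.y, dz = pz - b.z;
        float d2 = dx * dx + dy * dy + dz * dz;
        if (d2 == 0.0f) d2 = 1e-12f;  // avoid dividing by zero at a center
        sum += (b.radius * b.radius) / d2;
    }
    return sum;
}

// Inside/outside test, e.g. for classifying grid corners in marching cubes.
bool insideMetaballs(const std::vector<Ball>& balls, float px, float py, float pz,
                     float threshold = 1.0f) {
    assert(threshold > 0.0f);
    return metaballField(balls, px, py, pz) >= threshold;
}
```

The blending comes from the sum: a point midway between two nearby balls can be outside each ball alone but inside their combined field, which is what makes the skin look organic.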

Metaball Demos: These demos show the use of metaballs within the modeler framework. The first demo allows you to play around with three metaballs just to see how they interact with one another. The second demo shows an application of metaballs to create a twisting snake-like tube. Both these demos were created using the metaball implementation from a past CSE 457 student's project.

Demo 1: Basic Texture Mapped Metaballs

Demo 2: Cool Metaball Snake

If you have a sufficiently complex model, you'll soon realize what a pain it is to have to play with all the sliders to pose your character correctly. Implement a method of adjusting the joint angles, etc., directly through the viewport.

For instance, clicking on the shoulder of a human model might select it and activate a sphere around the joint. Click-dragging the sphere then should rotate the shoulder joint intuitively.

For the elbow joint, however, a sphere would be quite unintuitive, as the elbow can only rotate about one axis. For ideas, you may want to play with the Maya 3D modeling/animation package, which is installed on the workstations in 228.

Credit depends on quality of implementation.

Another method to build organic shapes is subdivision surfaces.

Implement these for use in your model. You may want to visit this to get some starter code.

The hierarchical model that you created is controlled by forward kinematics; that is, the positions of the parts vary as a function of joint angles. More mathematically stated, the positions of the joints are computed as a function of the degrees of freedom (these DOFs are most often rotations). The problem of inverse kinematics is to determine the DOFs of a model to satisfy a set of positional constraints, subject to the DOF constraints of the model (a knee on a human model, for instance, should not bend backwards).

This is a significantly harder problem than forward kinematics. Aside from the complicated math involved, many inverse kinematics problems do not have unique solutions. Imagine a human model, with the feet constrained to the ground. Now we wish to place the hand, say, about five feet off the ground. We need to figure out the value of every joint angle in the body to achieve the desired pose. Clearly, there are an infinite number of solutions. Which one is "best"?

Now imagine that we wish to place the hand 15 feet off the ground. It's fairly unlikely that a realistic human model can do this with its feet still planted on the ground. But inverse kinematics must provide a good solution anyway. How is a good solution defined?

Your solver should be fully general and not rely on your specific model (although you can assume that the degrees of freedom are all rotational). Additionally, you should modify your user interface to allow interactive control of your model through the inverse kinematics solver. The solver should run quickly enough to respond to mouse movement.
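One simple iterative approach is cyclic coordinate descent (CCD): sweep from the last joint back to the first, rotating each joint so the end effector swings toward the target, and repeat until it is close enough. This sketch handles only a planar chain of rotational joints with no joint limits, and every name in it is hypothetical; a solver for this item would still need 3D rotations, your model's DOF limits, and the mouse-driven UI. Jacobian-based methods are a common alternative.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct Point2 { float x, y; };

// Forward kinematics: joint i rotates by angles[i] relative to the previous
// link, and link i has length lengths[i]. Returns the end of the last link.
Point2 endEffector(const std::vector<float>& angles, const std::vector<float>& lengths) {
    float x = 0.0f, y = 0.0f, total = 0.0f;
    for (std::size_t i = 0; i < angles.size(); ++i) {
        total += angles[i];
        x += lengths[i] * std::cos(total);
        y += lengths[i] * std::sin(total);
    }
    return {x, y};
}

// Position of joint k (the base joint sits at the origin).
Point2 jointPosition(const std::vector<float>& angles,
                     const std::vector<float>& lengths, std::size_t k) {
    auto n = static_cast<std::ptrdiff_t>(k);
    std::vector<float> a(angles.begin(), angles.begin() + n);
    std::vector<float> l(lengths.begin(), lengths.begin() + n);
    return endEffector(a, l);
}

// Cyclic coordinate descent. Returns true if the end effector gets within
// `tolerance` of the target; angles are updated in place either way.
bool solveCCD(std::vector<float>& angles, const std::vector<float>& lengths,
              Point2 target, int maxSweeps = 100, float tolerance = 1e-3f) {
    assert(angles.size() == lengths.size() && !angles.empty());
    for (int sweep = 0; sweep < maxSweeps; ++sweep) {
        for (std::size_t k = angles.size(); k-- > 0;) {
            Point2 j = jointPosition(angles, lengths, k);
            Point2 e = endEffector(angles, lengths);
            // Rotate joint k so the joint-to-effector direction points at the target.
            float current = std::atan2(e.y - j.y, e.x - j.x);
            float desired = std::atan2(target.y - j.y, target.x - j.x);
            angles[k] += desired - current;
        }
        Point2 e = endEffector(angles, lengths);
        if (std::hypot(e.x - target.x, e.y - target.y) < tolerance) return true;
    }
    return false;
}
```

For an unreachable target, CCD simply stretches the chain toward it and reports failure, which is one possible answer to the "15 feet off the ground" question above.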

If you're interested in implementing this, you will probably want to consult the CSE558 lecture notes.

The primitives that you are using in your model are all built from simple two-dimensional polygons. That's how most everything is handled in the OpenGL graphics world. Everything ends up getting reduced to triangles.

Building a highly detailed polygonal model often requires millions of triangles. This can be a huge burden on the graphics hardware. One approach to alleviating this problem is to draw the model using varying levels of detail. In the modeler application, this can be done by specifying the quality (poor, low, medium, high). This unfortunately is a fairly hacky solution to a more general problem.

First, implement a method for controlling the level of detail of an arbitrary polygonal model. You will probably want to devise some way of representing the model in a file. Ideally, you should not need to load the entire file into memory if you're drawing a low-detail representation.

Now the question arises: how much detail do we need to make a visually nice image? This depends on a lot of factors. Farther objects can be drawn with fewer polygons, since they're smaller on screen. See Hugues Hoppe's work on View-dependent refinement of progressive meshes for some cool demos of this. Implement this or a similar method, making sure that your user interface supplies enough information to demonstrate the benefits of using your method. There are many other criteria to consider that you may want to use, such as lighting and shading (dark objects require less detail than light ones; objects with matte finishes require less detail than shiny objects).

Many 3D models come in the form of static polygon meshes. That is, all the geometry is there, but there is no inherent hierarchy. These models may come from various sources, for instance 3D scans. Implement a system to easily give the model some sort of hierarchical structure. This may be through the user interface, or perhaps by fitting a model with a known hierarchical structure to the polygon mesh (see this for one way you might do this). If you choose to have a manual user interface, it should be very intuitive.

Through your implementation, you should be able to specify how the deformations at the joints should be done. On a model of a human, for instance, a bending elbow should result in the appropriate deformation of the mesh around the elbow (and, if you're really ambitious, some bulging in the biceps).