where the summation is over all pixels p in the window. The weights

In this component, you will write code to detect discriminating features in an image and find the best matching features in other images. Because features should be reasonably invariant to translation, rotation (plus illumination and scale if you do the extra credit), you'll use a feature descriptor discussed during lecture and you'll evaluate its performance on a suite of benchmark images. As part of the extra credit you'll have the option of creating your own feature descriptors. If there are enough entries we'll rank the performance of features that students in the class come up with, and compare them with the current state-of-the-art.

For the second part of the assignment, you will apply your features to automatically stitch images into a panorama.

To help you visualize the results and debug your program, we provide a working user interface that displays detected features and best matches in other images. We also provide sample feature files that were generated using SIFT, the current best of breed technique in the vision community, for comparison.

This component has three parts: feature detection, description, and matching..

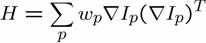

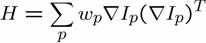

In this step, you will identify points of interest in the image using the Harris corner detection method. The steps are as follows (see the lecture slides/readings for more details) For each point in the image, consider a window of pixels around that point. Compute the Harris matrix H for that point, defined as

where

the summation is over all

pixels p in the window. The weights

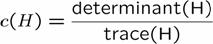

Note that H is a 2x2 matrix. To find interest points, first compute the corner strength function (the "Harris operator")

Once you've computed c for every point in the image, choose points where c is above a threshold. You also want c to be a local maximum in at least a 3x3 neighborhood.

Now that you've identified points of interest, the next step is to come up with a descriptor for the feature centered at each interest point. This descriptor will be the representation you'll use to compare features in different images to see if they match. You'll create the descriptor using the following steps in the warpComputeFeatures function in the code skeleton (also see class notes):

1. Prefilter the image using the 7x7 Gaussian filter. You may find the ImageLib "Convolve" function (in Convolve.h) useful here.

2. Determine the homography (rotation, translation and scale) required to warp an 8x8 image to a rotated 40x40 window centered at the feature.

3. Use the WarpGlobal function to acquire the values of the 8x8 downsampled image.

4. Update the feature descriptor with this data.

void WarpGlobal(CImageOf<T> src, CImageOf<T>& dst, CTransform3x3 M, WarpInterpolationMode interp);

WarpGlobal performs an inverse warp of the source image into the destination image. In other words, for every pixel of the destination image, the pixel in source image is computed using the specified transformation (and interpolated, if required). The transformation is specified by a 3x3 homography matrix that can represent rigid, affine, or perspective transformations.

Now that you've detected and described your features, the next step is to write code to match them, i.e., given a feature in one image, find the best matching feature in one or more other images. This part of the feature detection and matching component is mainly designed to help you test out your feature descriptor. You will implement a more sophisticated feature matching mechanism in the second component when you do the actual image alignment for the panorama.

The simplest approach is the following: write a procedure that compares two features and outputs a score saying how well they match. For example, you could simply sum the absolute value of differences between the descriptor elements. Use this to compute the best match between one feature and a set of other features by evaluating the score for every candidate match. You can optionally explore faster matching algorithms for extra credit.

Your routine should return NULL if there is no good match in the other image(s). This requires that you make a binary decision as to whether a match is good or not. Implement two methods to solve this problem:

1. use a threshold on the match score

2. compute (score of the best feature match)/(score of the

second best feature match), and threshold that

Now you're ready to go! Using the UI and skeleton code that we provide, you can load in a set of images, view the detected features, and visualize the feature matches that your algorithm computes.

We are providing a set of benchmark images to be used to test the performance of your algorithm as a function of different types of controlled variation (i.e., rotation, scale, illumination, perspective, blurring). For each of these images, we know the correct transformation and can therefore measure the accuracy of each of your feature matches. This is done using a routine that we supply in the skeleton code.

You should also test the matching against the images you will take for your panorama (described in next component).

Follow these steps to get started quickly:

Download the skeleton code here.

Download some

image sets: graf, Yosemite.

Included with these images are some SIFT feature files and image

database files.

After compiling and linking the skeleton code, you will have an executable Features This can be run in several ways:

Features

with no command line options starts the GUI. Inside the GUI, you can

load a query image and its corresponding feature file, as well as an

image database file, and search the database for the image which best

matches the query features. You can use the mouse buttons to select a

subset of the features to use in the query.

Until you write your feature matching routine, the features are matched by minimizing the SSD distance between feature vectors.

Features computeFeatures imagefile featurefile

[featuretype]

uses your feature detection routine to compute the features for imagefile,

and writes them to featurefile. featuretype

specifies which of your types of features (if you choose to

implement another feature for extra credit) to compute.

Features matchFeatures featurefile1 featurefile2 threshold

matchfile [matchtype]

takes in two sets of features,

featurefile1 and featurefile2 and matches them using

your matching routine (the matching routine to use is selected by

[matchtype]; 1 (SSD) is the default). The threshold for determining

which matches to keep is given by threshold. The results are

written to a file, matchfile, which can later be read by the

Panorama program.

Features matchSIFTFeatures featurefile1 featurefile2 threshold

matchfile [matchtype]

same as above, but uses SIFT features.

We have given you a number of classes and methods to help get you started. The only code you need to write is for your feature detection methods and your feature matching methods, all in features.cpp. Then, you should modify computeFeatures and matchFeatures in the file features.cpp to call the methods you have written. We have provided a function dummyComputeFeatures that shows how to create the code to detect and describe features,as well as integrate it into the system. The function dummyMatchFeatures demonstrates a comparison and matching algorithm between features. The function warpComputeFeatures is the main function you will complete, along with the helper functions computeHarrisValues and computeLocalMaxima.

Here is a list of suggestions for extending the program for extra credit. You are encouraged to come up with your own extensions as well!