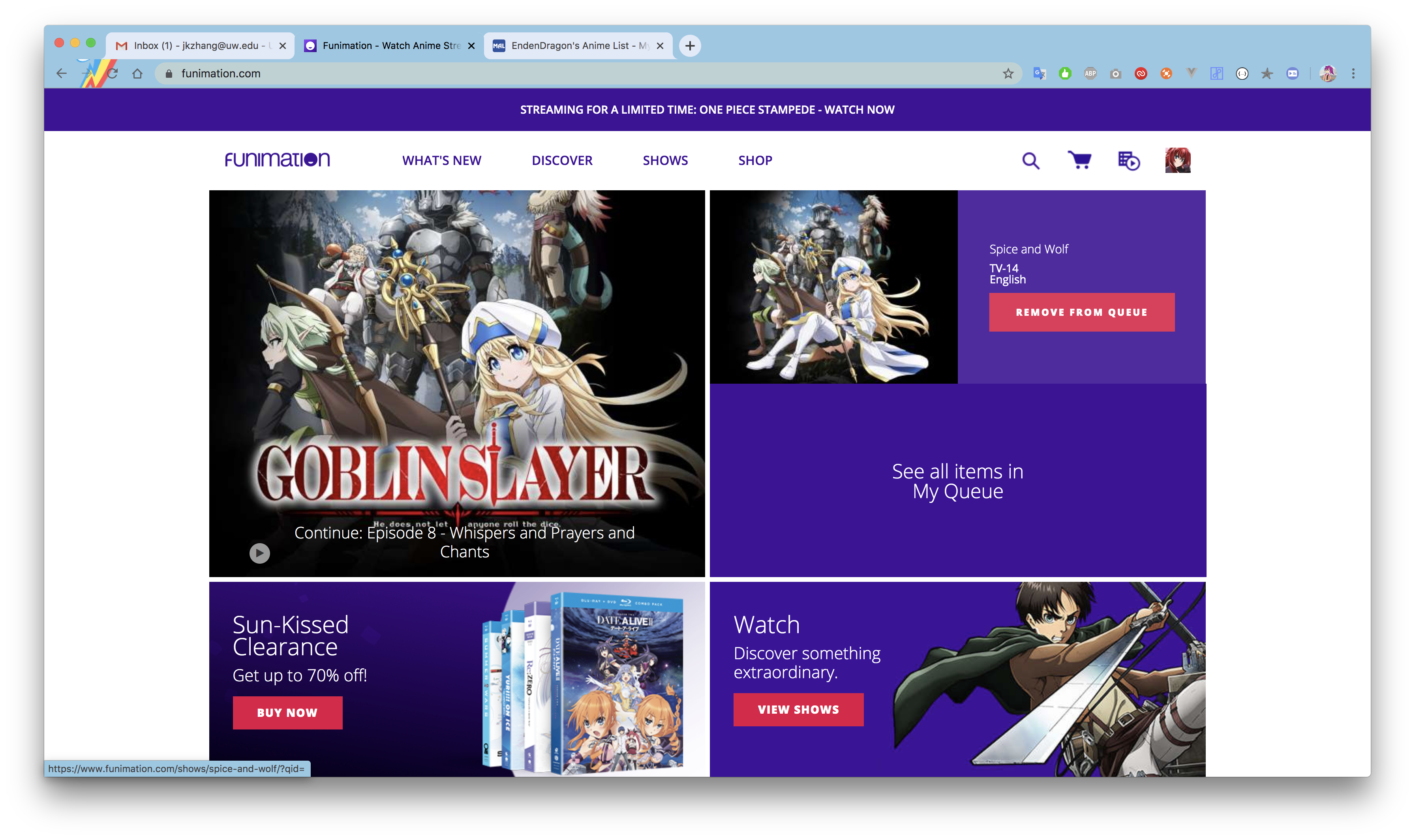

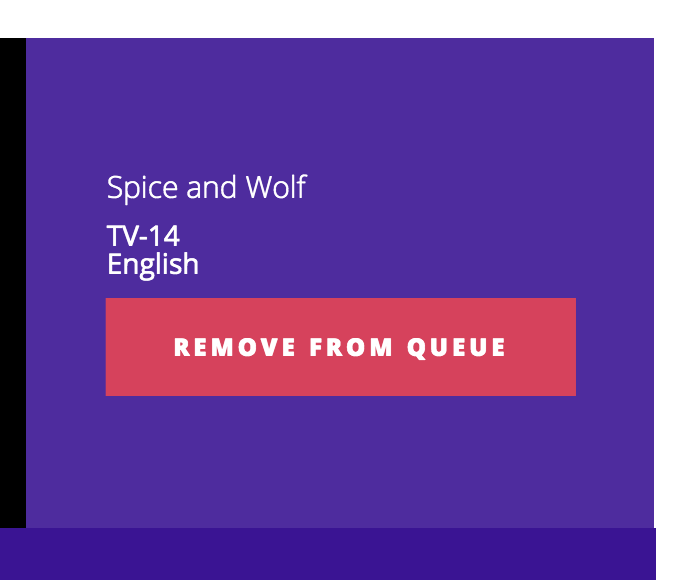

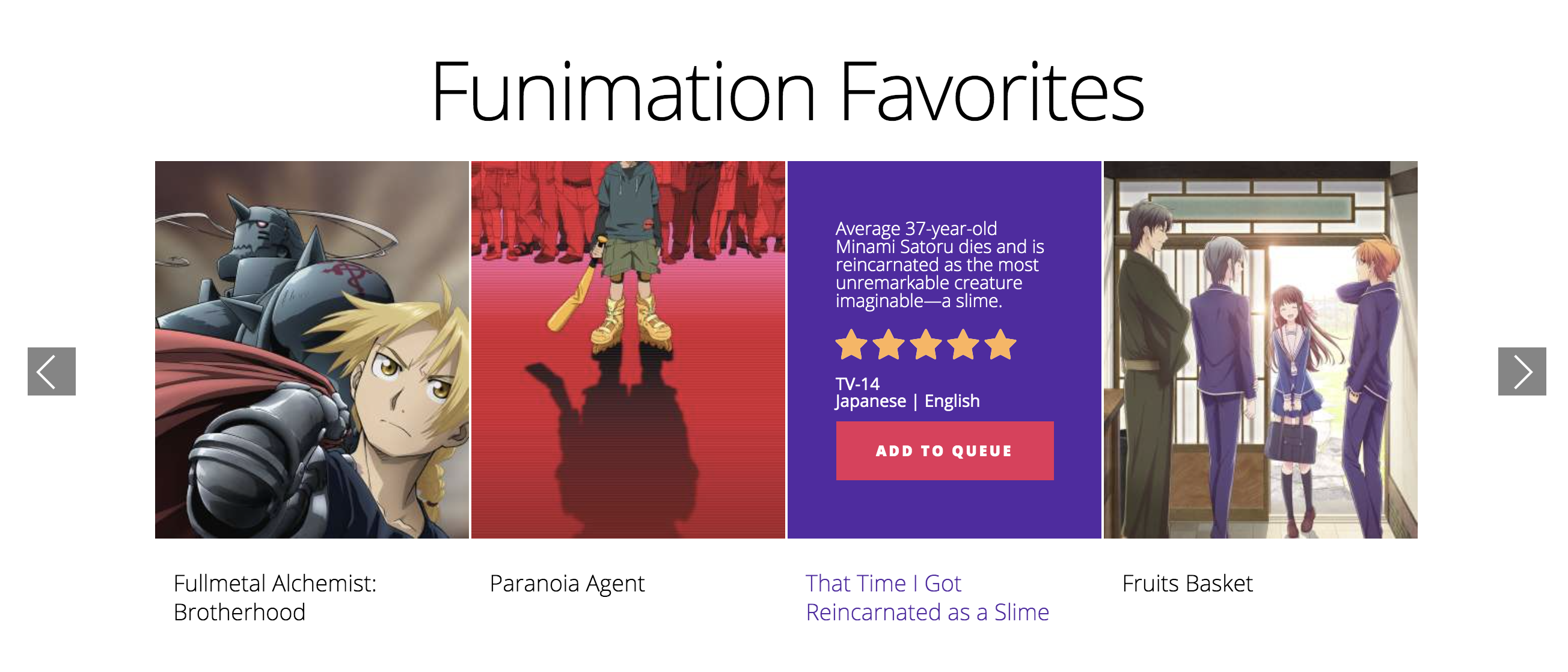

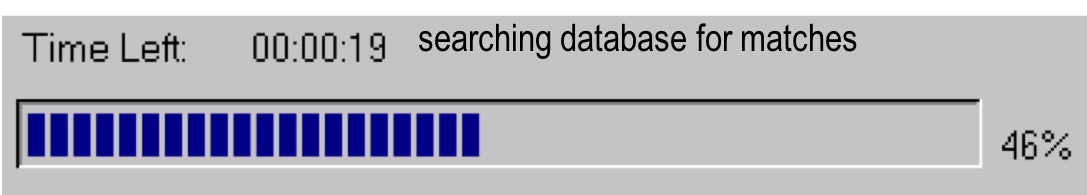

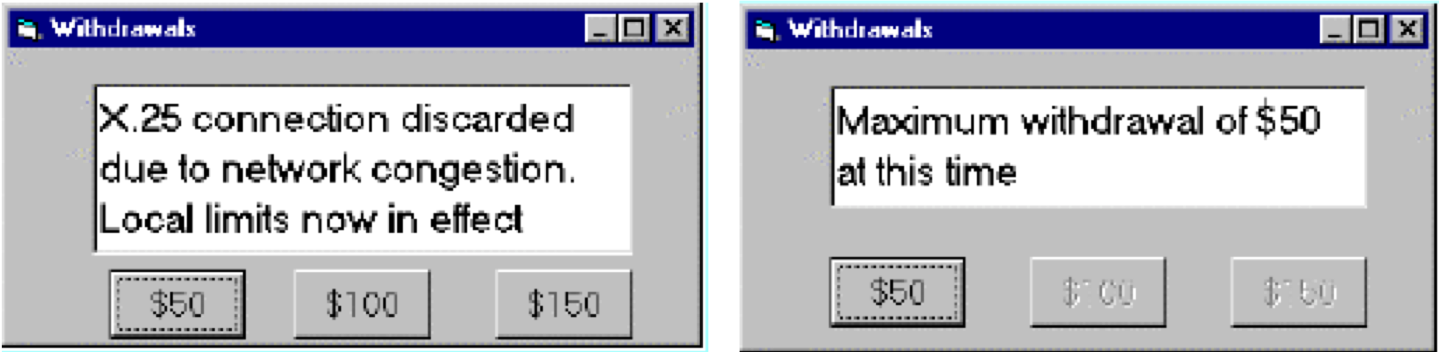

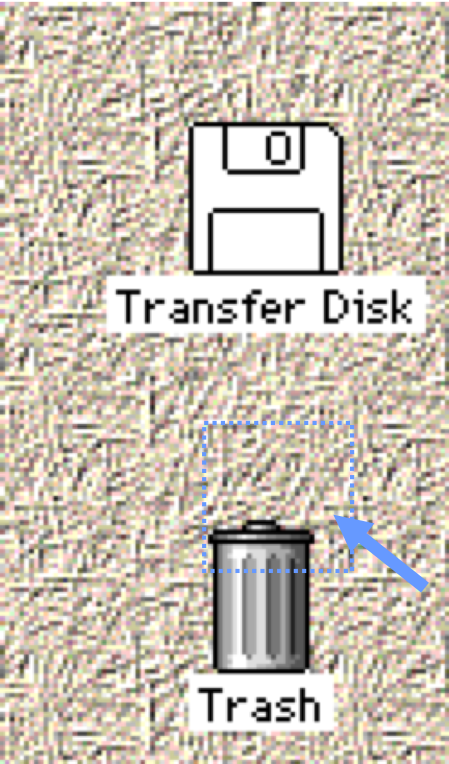

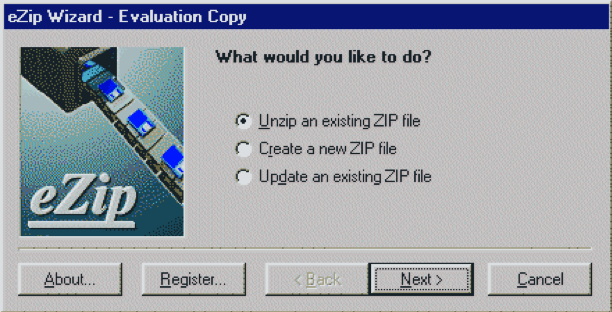

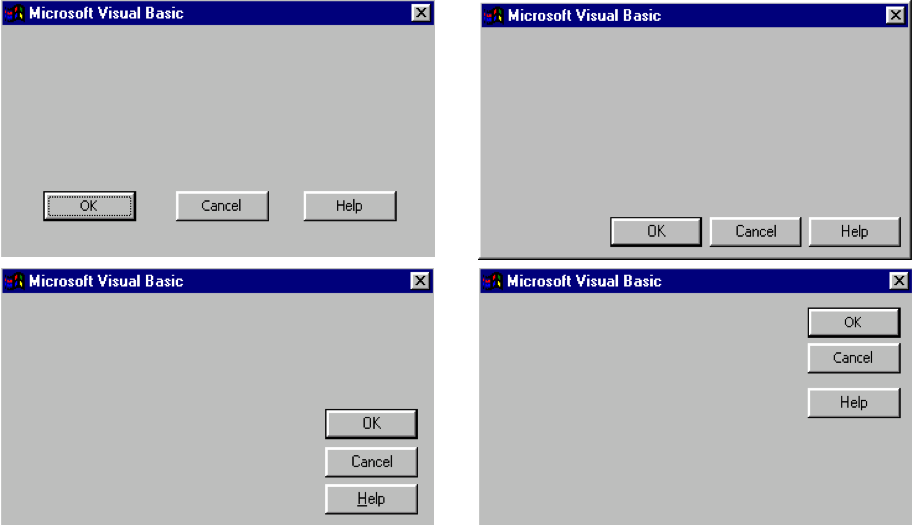

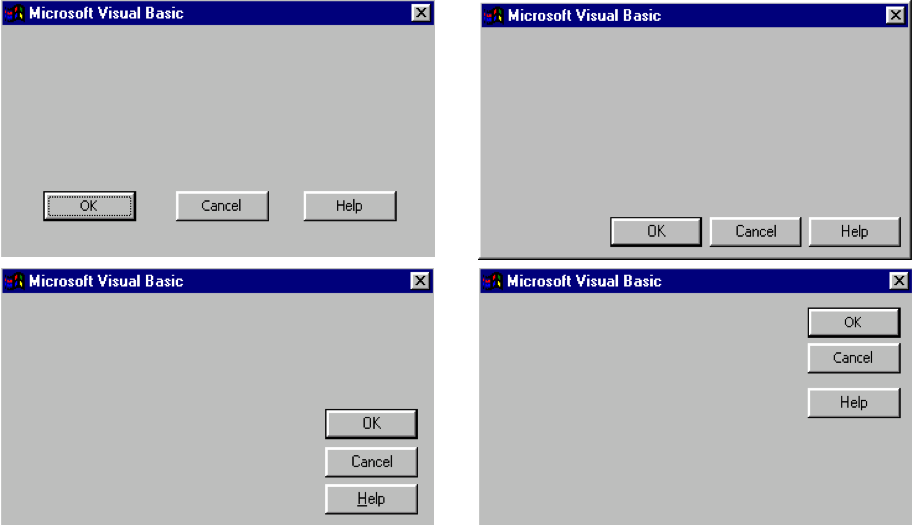

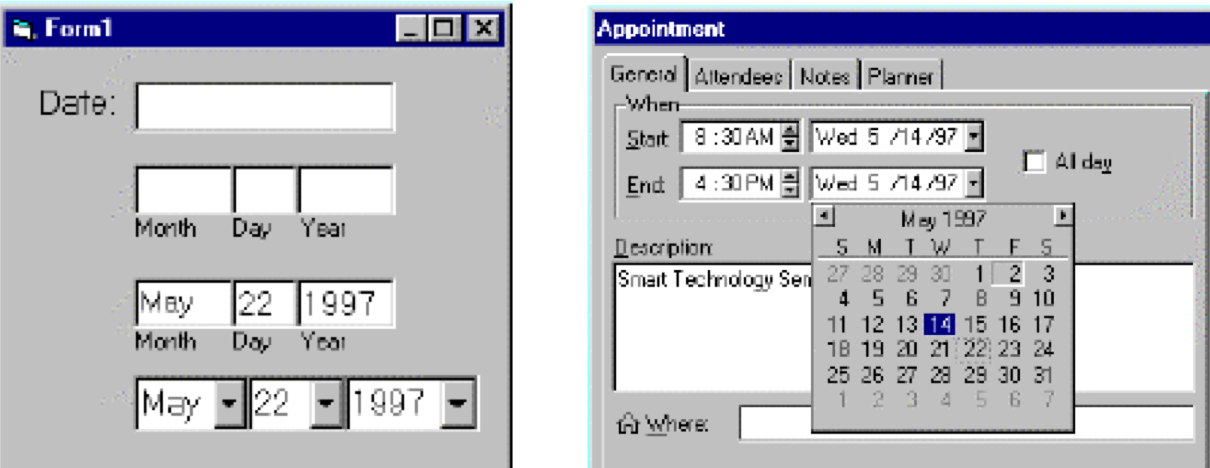

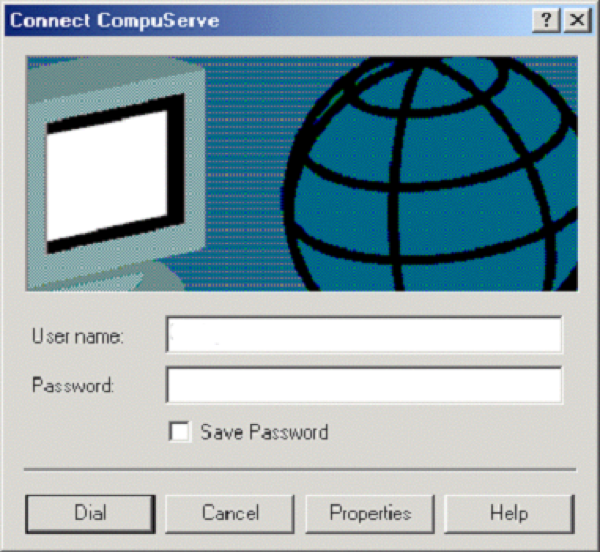

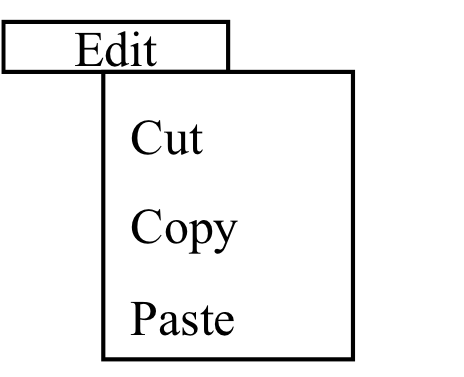

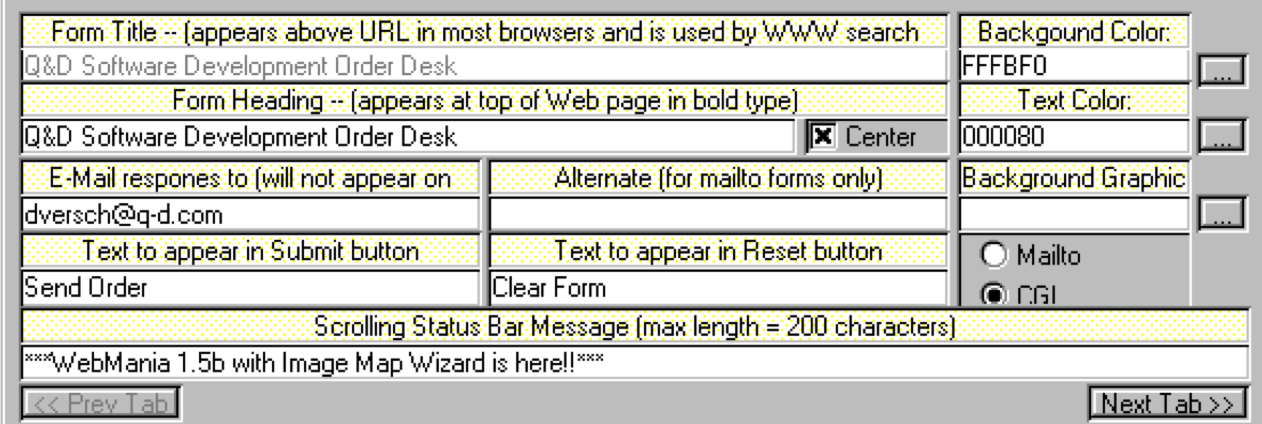

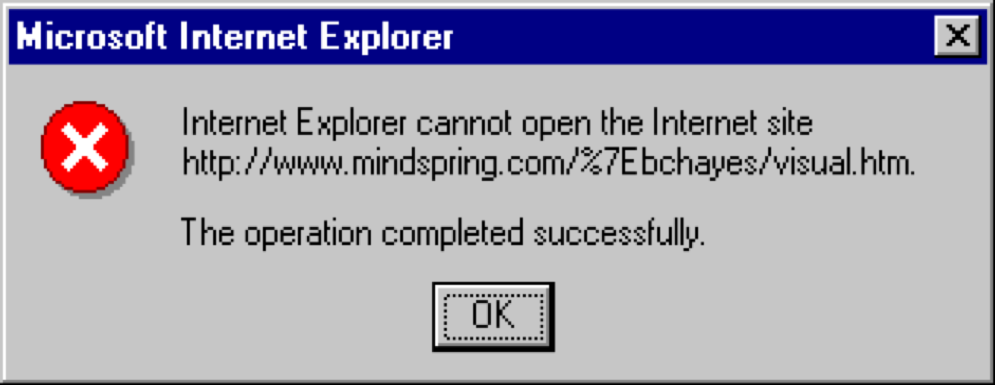

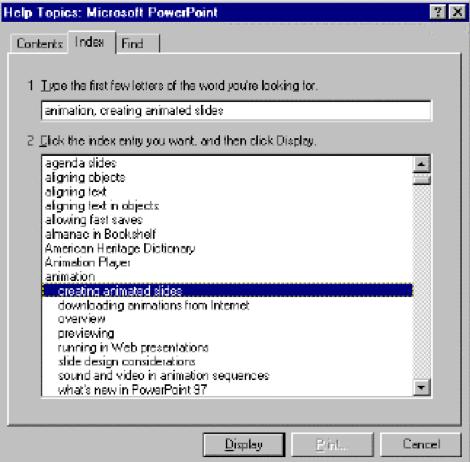

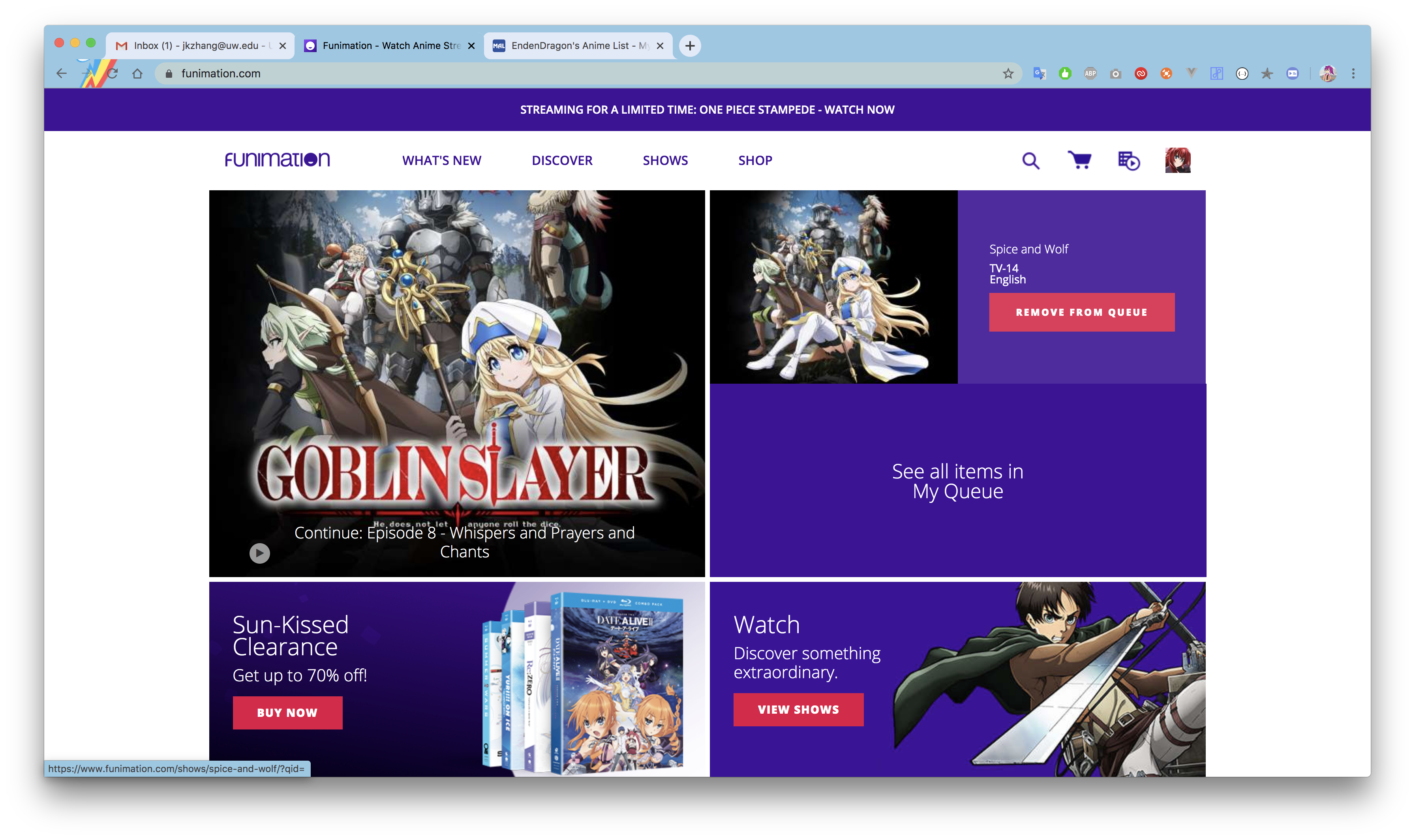

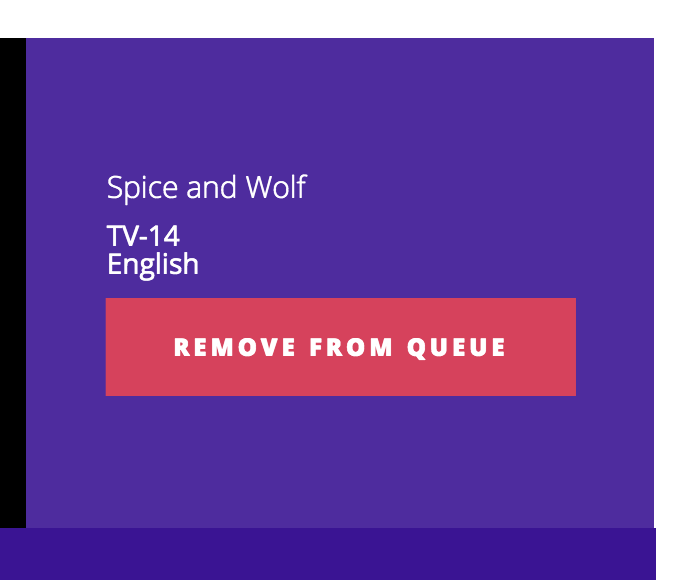

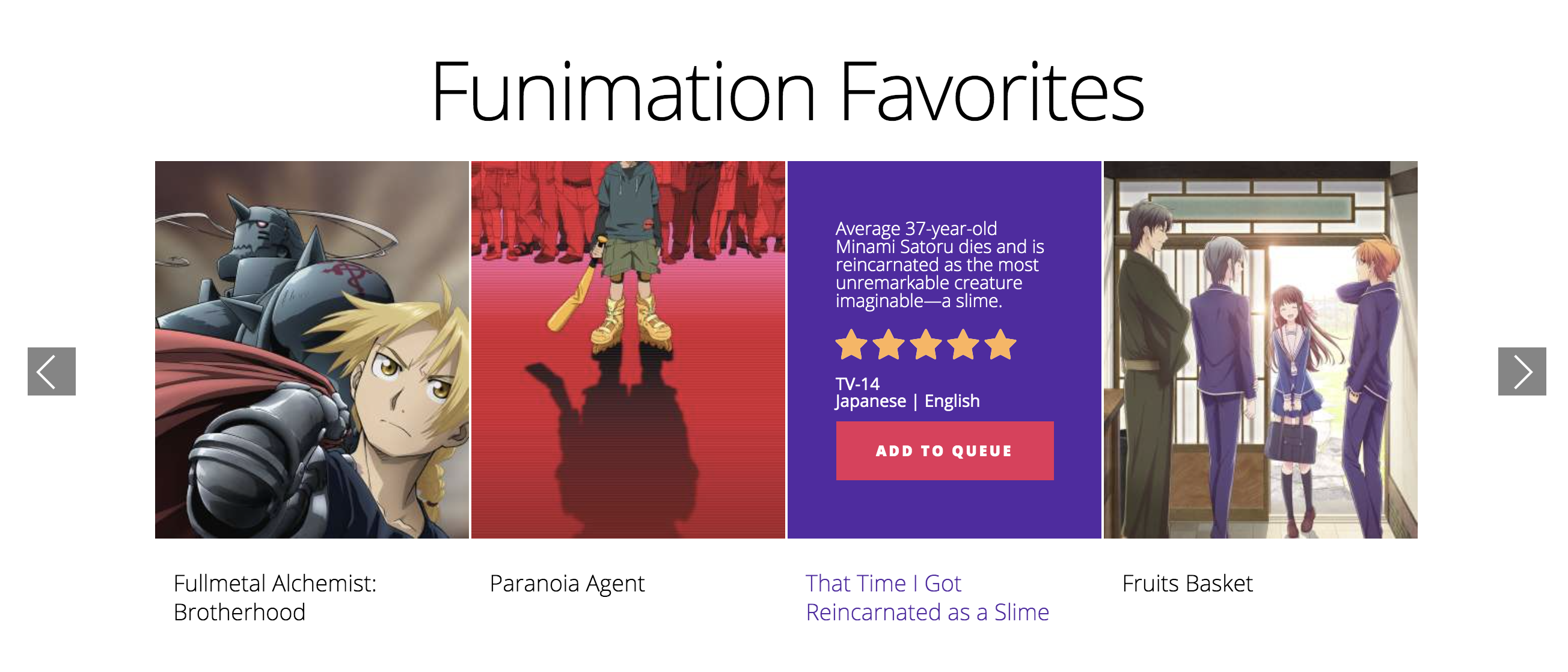

layout: false

# Hall of Shame?

What do you think happened here?

.left-column50[  ]

.right-column40[   ]

???

Thanks to Jeremy Zhang for the find.

---
name: inverse
layout: true
class: center, middle, inverse

# Heuristic Evaluation for Analyzing Interfaces

Lauren Bricker

CSE 340 Spring 2020

---
layout: false

[//]: # (Outline Slide)

# Today's goals

- [Combined data from Menus](https://docs.google.com/spreadsheets/d/1neu_22-YTI3TsP5uHKMsiEs4yxxz4xtghk69bYIgoJo/edit?usp=sharing)
- Introduce Heuristic Evaluation
- Describe UARs
- Time at the end to fill out the [course eval](https://uw.iasystem.org/survey/227053)

---
.left-column[
## Introducing Heuristic Evaluation
]
.right-column[
.quote[Discount usability engineering methods] -- Jakob Nielsen

A small team of evaluators inspects an interface against recognized usability principles

Heuristics: “rules of thumb”
]

???

"serving to discover or find out," 1821, irregular formation from Gk. heuretikos "inventive," related to heuriskein "to find" (cognate with O.Ir. fuar "I have found"). Heuristics "study of heuristic methods," first recorded 1959.

---
.left-column[
## Introducing Heuristic Evaluation
]
.right-column[
First introduced in 1990 by Nielsen & Molich

Quick, inexpensive, popular technique

~5 experts find 70-80% of problems

Based on 10 heuristics

Does not require a working interface
]

---
<div class="mermaid">
gantt
  title Typical Heuristic Evaluation Process
  section Evaluation
  dateFormat DD
  Designer Provides Setting (Description of Interface and List of Tasks) :list, 22, 5d
  5ish evaluators Try tasks and record problems :evals, after list, 5d
  Designer conducts synthesis and analysis :synthesis, 28, 5d
  Designer writes report :30, 5d
</div>

---
.left-column[
## So what are the heuristics?
]

--

.right-column[
- H1: Visibility of system status
- H2: Match between system and the real world
- H3: User control and freedom
- H4: Consistency and standards
- H5: Error prevention
- H6: Recognition vs. recall
- H7: Flexibility and efficiency of use
- H8: Aesthetic and minimalist design
- H9: Error recovery
- H10: Help and documentation
]

???

These should not be hugely surprising after everything we've talked about...

---
.left-column[
## H1: Visibility of System Status

Keep users informed about what is going on
]
.right-column[
What does this interface tell you?
]

--

.right-column[
- What input has been received--Does the interface above say what the search input was?
- What processing it is currently doing--Does it say what it is currently doing?
- What the results of processing are--Does it give the results of processing?

Feedback lets users monitor progress toward completing their task, gives closure on tasks, and reduces user anxiety (Lavery et al)
]

---
.left-column[
## H2: Match between system and real world

Speak the users’ language

Follow real world conventions
]
.right-column[
- Use concepts, language and real-world conventions that are familiar to the user.
- Developers will need to understand the task from the point of view of users.
- Cultural issues are relevant for the design of systems that are expected to be used globally.

A good match minimizes the extra knowledge required to use the system, simplifying all task action mappings (re-expression of users’ intuitions into system concepts)
]

---
.left-column[
## H2: Match between system and real world

Example of a huge violation of H2
]
.right-column[
| | |
|--|--|
|  |Possibly the biggest usability problem in the Macintosh. Heuristic violation--people want to get their disk out of the machine--not discard it.|
]

---
.left-column[
## H2: Match between system and real world

Whether an example is a mismatch depends on knowledge about users
]
.right-column[
Would an icon with a red flag for new mail be appropriate in all cultures?
]

---
.left-column[
## H3: User Control and Freedom

“Exits” for mistaken choices, undo, redo

Don’t force down fixed paths
]
.right-column[
Users choose actions by mistake
]

---
.left-column[
## H4: Consistency and Standards
]
.right-column[
The same words, situations, and actions should mean the same thing in similar situations; the same things should look the same and be located in the same place.

Different things should look different
]

---
.left-column[
## H4: Consistency and Standards
]
.right-column[
- Both H2 (Match between system and the real world) and H4 relate to the user’s prior knowledge. The difference is
  - H2 is knowledge of the world
  - H4 is knowledge of other parts of the application and of other applications on the same platform.
- Consistency within an application and within a platform. Developers need to know platform conventions.
- Consistency with old interface

Consistency minimizes the new knowledge required to use the system by letting users generalize from existing experience of the system to other systems
]

---
.left-column[
## H4: Consistency and Standards
]
.right-column[
Evidence: Should include at least
- two inconsistent elements in the same interface, or
- an element that is inconsistent with a platform guideline

Explanation: What the inconsistent element is and what it is inconsistent with
]

---
.left-column[
## H5: Error Prevention

Careful design which prevents a problem from occurring in the first place
]
.right-column[
- Help users select among legal actions (e.g., greying out inappropriate buttons) rather than letting them select and then telling them that they have made an error (gotcha!).
- Subset of H1 (Visibility of system status) but so important it gets a separate heuristic.

Motivation: Errors are a main source of frustration, inefficiency and ineffectiveness during system usage (Lavery et al)

Explanation in terms of tasks and system details such as adjacency of function keys and menu options, discriminability of icons and labels.
]

---
.left-column[
## H6: Recognition Rather than Recall

Make objects, actions and options visible or easily retrievable
]
.right-column[
- Classic examples:
  - command line interfaces (`rm *`)
  - Arrows on keys that people can’t map to functions
- Much easier for people to remember what to do if there are cues in the environment

Cues go into working memory through perception
]

---
.left-column[
## H7: Flexibility and Efficiency of Use

Accelerators for experts (e.g., gestures, keyboard shortcuts)

Allow users to tailor frequent actions (e.g., macros)
]
.right-column[
- Typing single keys is typically faster than continually switching the hand between the keyboard and the mouse and pointing to things on the screen.
- Skilled users develop plans of action, which they will want to execute frequently, so tailoring can capture these plans in the interface.
]

---
.left-column[
## H8: Aesthetic and Minimalist design

Dialogs should not contain irrelevant or rarely needed information
]
.right-column[
- Visual search--eyes must search through more. More (irrelevant info) interferes with Long Term Memory (LTM) retrieval of information that is relevant to the task.
- Cluttered displays increase search times for commands and cause users to miss features on the screen (Lavery et al)

_Chartjunk_ (Tufte): "The interior decoration of graphics generates a lot of ink that does not tell the viewer anything new."
]

---
.left-column[
## H9: Help users recognize, diagnose, and recover from errors
]
.right-column[
- Error messages in language the user will understand
- Precisely indicate the problem
- Constructively suggest a solution
]

---
.left-column[
## H10: Help and Documentation

Easy to search

Focused on the user’s task

List concrete steps to carry out

Always available
]
.right-column[
Allow search by gist--people do not remember exact system terms
]

???

If user ever even knew system terms.
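---
# Sketch: how many evaluators?

The earlier claim that about 5 experts find 70-80% of problems can be sanity-checked with the problem-discovery model usually attributed to Nielsen and Landauer, in which each evaluator independently finds a fixed fraction of the problems. This is a minimal sketch, not part of the original lecture: the function name `proportion_found` and the per-evaluator detection rate of 0.25 are illustrative assumptions, and real rates vary by project and evaluator.

```python
def proportion_found(evaluators: int, detection_rate: float = 0.25) -> float:
    """Expected fraction of problems found by `evaluators` independent evaluators.

    Model: found(i) = 1 - (1 - rate) ** i.
    The default rate is an assumed, illustrative value, not a measured one.
    """
    if evaluators < 0:
        raise ValueError("evaluators must be non-negative")
    if not 0.0 <= detection_rate <= 1.0:
        raise ValueError("detection_rate must be between 0 and 1")
    return 1.0 - (1.0 - detection_rate) ** evaluators


if __name__ == "__main__":
    # Print the expected coverage for 1 to 10 evaluators under the assumed rate.
    for n in range(1, 11):
        print(f"{n:2d} evaluators -> {proportion_found(n):.0%} of problems")
```

Under the assumed rate, five evaluators land at roughly three quarters of the problems, and each extra evaluator adds less than the one before, which is one way to think about *saturation*.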
---
<div class="mermaid">
gantt
  title Typical Heuristic Evaluation Process
  section Evaluation
  dateFormat DD
  Designer Provides Setting (Description of Interface and List of Tasks) :list, 22, 5d
  5ish evaluators Try tasks and record problems :evals, after list, 5d
</div>

Why 5 or more people?

- 4 or 5 are recommended by Nielsen (this is a point of contention. I aim for *saturation*).
- A single person will not be able to find all usability problems
- Different people find different usability problems
- Successful evaluators may find both easy and hard problems

???

You can estimate how many you need (see NM book, pp 32-35). 4 or 5 are recommended by Nielsen

---
<div class="mermaid">
gantt
  title Typical Heuristic Evaluation Process
  section Evaluation
  dateFormat DD
  Designer Provides Setting (Description of Interface and List of Tasks) :list, 22, 5d
  5ish evaluators Try tasks and record problems :evals, after list, 5d
</div>

How should the Designer "provide a setting"?

How should the evaluator evaluate?

---
<div class="mermaid">
gantt
  title Typical Heuristic Evaluation Process
  section Evaluation
  dateFormat DD
  Designer Provides Setting (Description of Interface and List of Tasks) :list, 22, 5d
  5ish evaluators Try tasks and record problems :evals, after list, 5d
</div>

.left-column[
## What does the evaluator do?
]
.right-column[
Designer: Briefing (HE method, Domain, Scenario)

Evaluator:
- Two passes through interface (video in our case)
  - Inspect flow
  - Inspect each screen, one at a time against heuristics
- Fill out a *Usability Aspect Report* (we'll keep this simple in peer review)
]

???

NOT a single-user empirical test; that is, do not say “I tried it and it didn’t work, therefore I’ll search for a heuristic this violates”

---
<div class="mermaid">
gantt
  title Typical Heuristic Evaluation Process
  section Evaluation
  dateFormat DD
  Designer Provides Setting (Description of Interface and List of Tasks) :list, 22, 5d
  5ish evaluators Try tasks and record problems :evals, after list, 5d
</div>

.left-column[
## Usability Aspect Report
]
.right-column[
UAR rather than “Problem report” because you report good aspects as well as problems -- you want to preserve them in the next iteration of the system!

- **UAR Identifier** (Type-Number) Problem or Good Aspect
- *Describe:* Succinct description of the usability aspect
- *Heuristics:* What heuristics are violated
- **Evidence:** support material for the aspect
- **Explanation:** your own interpretation
- *Severity:* your reasoning about importance
- **Solution:** if the aspect is a problem, include a possible solution and potential trade-offs
- **Relationships:** to other usability aspects (if any)
]

???
We'll ask you to do the things in italics in peer grading

---
<div class="mermaid">
gantt
  title Typical Heuristic Evaluation Process
  section Evaluation
  dateFormat DD
  Designer Provides Setting (Description of Interface and List of Tasks) :list, 22, 5d
  5ish evaluators Try tasks and record problems :evals, after list, 5d
</div>

.left-column[
## Writing a description
]
.right-column[
Should be *A PROBLEM*, not a solution

Don't be misleading (e.g., “User couldn’t find state in the list” when the state wasn’t in the list)

Don't be overly narrow (e.g., “PA not listed” when there is nothing special about PA and other states are not listed)

Don't be too broad or not distinctive (e.g., “User can’t find item”)
]

---
<div class="mermaid">
gantt
  title Typical Heuristic Evaluation Process
  section Evaluation
  dateFormat DD
  Designer Provides Setting (Description of Interface and List of Tasks) :list, 22, 5d
  5ish evaluators Try tasks and record problems :evals, after list, 5d
</div>

.left-column[
## Picking a Heuristic
]
.right-column[
Ok to list more than one

This is subjective. Use your best judgement
]

---
<div class="mermaid">
gantt
  title Typical Heuristic Evaluation Process
  section Evaluation
  dateFormat DD
  Designer Provides Setting (Description of Interface and List of Tasks) :list, 22, 5d
  5ish evaluators Try tasks and record problems :evals, after list, 5d
</div>

.left-column[
## Deciding on a severity
]
.right-column[
- Make a claim about factors and support it with reasons
- Consider *Frequency* (e.g., All users would probably experience this problem because…)
- Consider *Impact*: Will it be easy or hard to overcome? NOT whether the task the user is doing is important (put importance of task in explanation and in justification of weighting, if relevant).
- Consider *Persistence*: Once the problem is known, is it a one-time problem or will the user be continually bothered? NOT low persistence because the user abandons the goal (that is impact--can’t overcome it. If the user can’t detect and can’t overcome it, the problem persists).
]

???

Why? Make claim (All users would PROBABLY experience this problem BECAUSE…) and IMMEDIATELY

---
<div class="mermaid">
gantt
  title Typical Heuristic Evaluation Process
  section Evaluation
  dateFormat DD
  Designer Provides Setting (Description of Interface and List of Tasks) :list, 22, 5d
  5ish evaluators Try tasks and record problems :evals, after list, 5d
</div>

.left-column[
## Rating Severity

5-point scale
]
.right-column[
0 - Not a problem at all (or a good feature)

1 - Cosmetic problem only

2 - Minor usability problem (fix with low priority)

3 - Major usability problem (fix with high priority)

4 - Usability catastrophe (imperative to fix before release)
]

---
<div class="mermaid">
gantt
  title Heuristic Evaluation Process
  section Evaluation
  dateFormat MM-DD
  Designer Provides Setting (Description of Interface and List of Tasks) :list, 03-02, 9d
  Videos distributed :vids, after list, 1d
  Tasks--5 evaluators :evals, after vids, 2d
  Section Problems
  E1--Problem1... :after vids, 2d
  E1--Problem2... :after vids, 2d
  E2--Problem3... :after vids, 2d
  ... :after vids, 2d
  Section Synthesis and Analysis
  Group like problems :analysis, 03-14, 2d
  Summarize problems :summarize, after analysis, 2d
  Write report :after summarize, 1d
</div>

Group like problems

- Important thing is whether they have a similar description
- This is for you to decide
- Similarity may be conceptual (e.g. the same problem may show up in multiple parts of your interface)

---
<div class="mermaid">
gantt
  title Heuristic Evaluation Process
  section Evaluation
  dateFormat MM-DD
  Designer Provides Setting (Description of Interface and List of Tasks) :list, 03-02, 9d
  Videos distributed :vids, after list, 1d
  Tasks--5 evaluators :evals, after vids, 2d
  Section Problems
  E1--Problem1... :after vids, 2d
  E1--Problem2... :after vids, 2d
  E2--Problem3... :after vids, 2d
  ... :after vids, 2d
  Section Synthesis and Analysis
  Group like problems :analysis, 03-14, 2d
  Summarize problems :summarize, after analysis, 2d
  Write report :after summarize, 1d
</div>

Summarize problems

- Average severities
- List all relevant heuristics
- List all areas of website affected
- Also prioritize at this point

---
<div class="mermaid">
gantt
  title Heuristic Evaluation Process
  section Evaluation
  dateFormat MM-DD
  Designer Provides Setting (Description of Interface and List of Tasks) :list, 03-02, 9d
  Videos distributed :vids, after list, 1d
  Tasks--5 evaluators :evals, after vids, 2d
  Section Problems
  E1--Problem1... :after vids, 2d
  E1--Problem2... :after vids, 2d
  E2--Problem3... :after vids, 2d
  ... :after vids, 2d
  Section Synthesis and Analysis
  Group like problems :analysis, 03-14, 2d
  Summarize problems :summarize, after analysis, 2d
  Write report :after summarize, 1d
</div>

Write report

We've provided a [template](../../assignments/undo-report)

---
.left-column[
## Advantages of HE
]
.right-column[
“Discount usability engineering”

Intimidation low

Don’t need to identify tasks, activities

Can identify some fairly obvious fixes

Can expose problems user testing doesn’t expose

Provides a language for justifying usability recommendations
]

---
.left-column[
## Disadvantages of HE
]
.right-column[
Un-validated

Unreliable

Should use usability experts

Problems unconnected with tasks

Heuristics may be hard to apply to new technology

Coordination costs
]

---
.left-column[
## Summary
]
.right-column[
Heuristic Evaluation can be used to evaluate & improve user interfaces

10 heuristics

Heuristic Evaluation process
- Individual: Flow & screens
- Group: Consensus report, severity

Usability Aspect Reports
- Structured way to record good & bad
]

---
# Hall of Shame?

Cycling back: What would you say in your HE of this interface?

![:youtube Video of funimation problems, 1zDMh3NHDjw]

---
# Hall of Shame?

Cycling back: What would you say in your HE of this interface?

.left-column50[  ]

.right-column40[   ]
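
---
# Sketch: summarizing UARs

The "Summarize problems" step (average severities, list all relevant heuristics, list all affected areas, prioritize) can be sketched in a few lines. This is only an illustration under stated assumptions, not the course's required tooling: the `UAR` class, its field names, the `group` label that the designer assigns when grouping like problems, and the `summarize` function are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class UAR:
    """One evaluator's report (hypothetical, simplified fields)."""
    evaluator: str
    group: str             # label assigned by the designer when grouping like problems
    heuristics: List[str]  # e.g. ["H3", "H5"]
    severity: int          # 0 (not a problem) to 4 (catastrophe)
    area: str              # part of the interface affected


def summarize(uars: List[UAR]) -> List[Dict]:
    """Group reports by label, then average severity and merge heuristics and areas."""
    groups: Dict[str, List[UAR]] = {}
    for uar in uars:
        groups.setdefault(uar.group, []).append(uar)

    rows = []
    for label, members in groups.items():
        rows.append({
            "problem": label,
            "mean_severity": mean(u.severity for u in members),
            "heuristics": sorted({h for u in members for h in u.heuristics}),
            "areas": sorted({u.area for u in members}),
            "reports": len(members),
        })
    # Prioritize: highest average severity first.
    rows.sort(key=lambda row: row["mean_severity"], reverse=True)
    return rows


if __name__ == "__main__":
    example = [
        UAR("E1", "no undo", ["H3"], 3, "checkout"),
        UAR("E2", "no undo", ["H3", "H5"], 4, "cart"),
        UAR("E1", "jargon", ["H2"], 1, "home"),
    ]
    for row in summarize(example):
        print(row)
```

Sorting by average severity is only one possible prioritization; frequency across evaluators (the `reports` count here) is another signal a designer might weigh.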